Ensuring Reliability in Flux Balance Analysis: A Complete Guide to Confidence Estimation and Robust Predictions for Drug Development

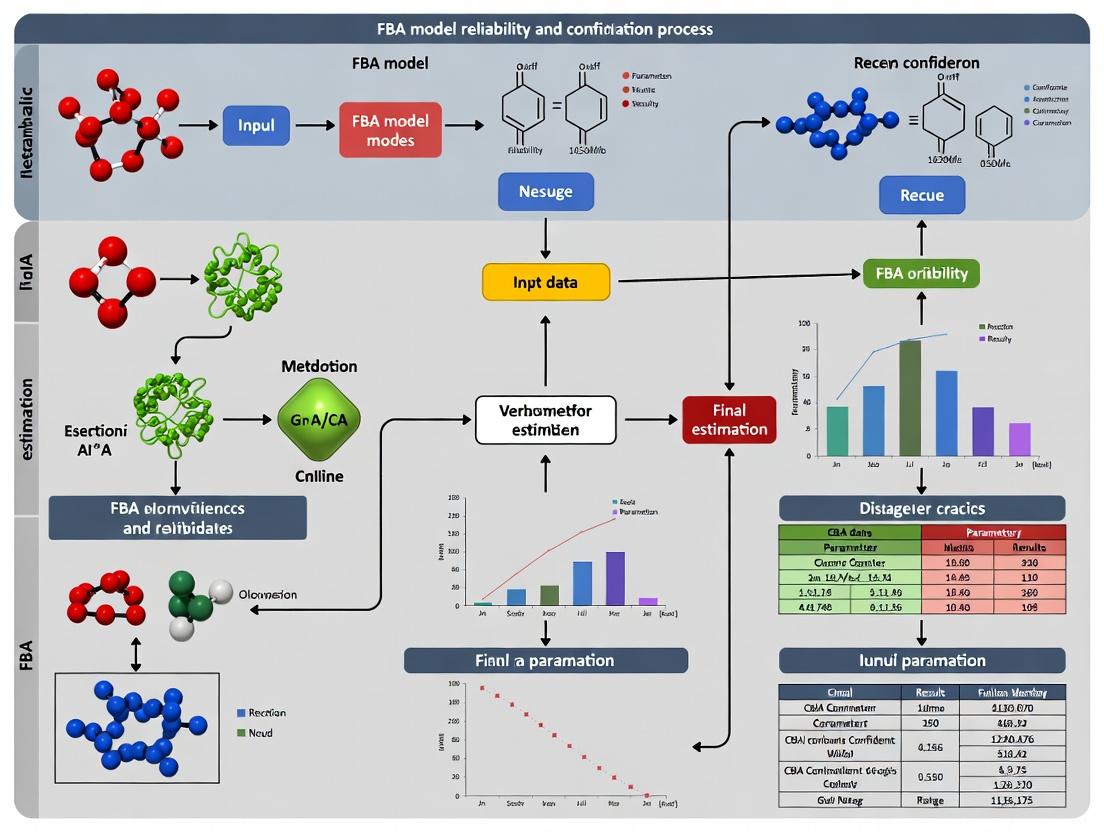

This article provides a comprehensive guide for researchers and drug development professionals on assessing and ensuring the reliability of Flux Balance Analysis (FBA) models.

Ensuring Reliability in Flux Balance Analysis: A Complete Guide to Confidence Estimation and Robust Predictions for Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on assessing and ensuring the reliability of Flux Balance Analysis (FBA) models. We explore the foundational principles of FBA, including core constraints and biological assumptions. We detail methodological approaches for confidence estimation, such as flux variability analysis (FVA) and Monte Carlo sampling, highlighting applications in metabolic engineering and drug target identification. The article addresses common pitfalls in model formulation and data integration, offering strategies for troubleshooting and optimization. Finally, we cover validation frameworks, including comparison with omics data and other constraint-based modeling techniques, to equip scientists with the tools needed to generate robust, actionable predictions for biomedical research.

Foundations of FBA: Understanding Core Principles, Assumptions, and Sources of Uncertainty

Flux Balance Analysis (FBA) is a cornerstone mathematical framework in systems biology and metabolic engineering. Its reliability and the confidence in its predictions are critical for translating in silico findings into actionable biological insights, particularly in drug discovery and therapeutic development. This whitepaper details the core mathematical foundations of FBA, its primary objective functions, and protocols for their application, framed within ongoing research aimed at quantifying and improving FBA model prediction confidence.

Core Mathematical Framework

FBA is a constraint-based modeling approach that predicts steady-state metabolic fluxes in a biochemical reaction network. The framework does not require kinetic parameters, instead relying on the stoichiometry of the network and physicochemical constraints.

The Fundamental Mathematical Problem

The core of FBA is a linear programming problem derived from mass conservation and network topology.

The Stoichiometric Matrix (S): An m × n matrix where m is the number of metabolites and n is the number of reactions. Each element S_ij is the stoichiometric coefficient of metabolite i in reaction j.

The Flux Vector (v): An n-dimensional vector representing the flux (rate) of each reaction in the network.

The Steady-State Assumption: At steady state, the concentration of internal metabolites does not change. This imposes the linear equality constraint:

S ⋅ v = 0

Flux Capacity Constraints: Each flux v_j is bounded by lower (lb_j) and upper (ub_j) bounds, derived from thermodynamic irreversibility or measured uptake/secretion rates:

lb ≤ v ≤ ub

The Linear Optimization Problem

Given the constraints, FBA finds a flux distribution v that optimizes a biologically relevant objective function Z:

Maximize (or Minimize): Z = c^T ⋅ v

Subject to: S ⋅ v = 0

and: lb ≤ v ≤ ub

Here, c is a vector of weights defining the linear objective function.
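
To make the constraint set concrete, the following minimal sketch checks whether a candidate flux vector satisfies S ⋅ v = 0 and lb ≤ v ≤ ub, and evaluates Z = c^T ⋅ v. The three-reaction toy network, its bounds, and the function names are illustrative assumptions rather than part of any published model. Finding the optimum itself requires a linear programming solver, which is not shown here.

```python
# Toy network (hypothetical): R1: -> A, R2: A -> B, R3: B ->
# Rows are metabolites (A, B); columns are reactions (R1, R2, R3).
S = [
    [1, -1, 0],   # A: produced by R1, consumed by R2
    [0, 1, -1],   # B: produced by R2, consumed by R3
]
lb = [0.0, 0.0, 0.0]           # all reactions irreversible
ub = [10.0, 1000.0, 1000.0]    # uptake (R1) capped at 10 flux units
c = [0.0, 0.0, 1.0]            # objective: flux through R3


def mass_balance(S, v):
    """Return S . v, the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


def is_feasible(S, v, lb, ub, tol=1e-9):
    """True if v satisfies the steady-state and flux-bound constraints."""
    steady = all(abs(x) <= tol for x in mass_balance(S, v))
    bounded = all(l - tol <= v_j <= u + tol for l, v_j, u in zip(lb, v, ub))
    return steady and bounded


def objective(c, v):
    """Return Z = c^T . v."""
    return sum(c_j * v_j for c_j, v_j in zip(c, v))


v_candidate = [10.0, 10.0, 10.0]
print(is_feasible(S, v_candidate, lb, ub), objective(c, v_candidate))
```

In this toy case the candidate is feasible because every metabolite is produced and consumed at the same rate and the uptake flux sits exactly at its upper bound.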

Key Objective Functions and Their Biological Rationale

The choice of objective function is a critical hypothesis about the presumed evolutionary optimization principle of the biological system. The reliability of an FBA prediction hinges on the appropriateness of this choice.

The most common objective functions are quantified and compared in the table below.

Table 1: Core FBA Objective Functions and Applications

| Objective Function | Mathematical Form (c^T ⋅ v) | Primary Biological Rationale | Typical Application Context | Key Reliability Consideration |

|---|---|---|---|---|

| Biomass Maximization | c_biomass = 1 for biomass reaction, 0 otherwise. | Cellular growth is the primary evolutionary driver for microbes in nutrient-rich conditions. | Microbial growth prediction, metabolic engineering for yield. | May not hold in non-growth conditions (stationary phase, stress). |

| ATP Maximization | c_ATP = 1 for ATP maintenance reaction (ATPM). | Cells may optimize for energy production, especially under energy-limiting conditions. | Analyzing energy metabolism, hypoxic environments. | Often coupled with other objectives; can produce unrealistic cycles. |

| Nutrient Uptake Minimization | Minimize sum of specific uptake fluxes. | Principle of metabolic parsimony: cells use resources efficiently. | Predicting minimal media, understanding regulation. | Sensitive to network gaps and inaccurate bounds. |

| Redox Potential Maximization | c_redox = 1 for reactions producing NADH, NADPH. | Maintaining redox balance is critical for cellular homeostasis. | Studies of oxidative stress, fermentation products. | Difficult to define universally; network must accurately represent redox carriers. |

Experimental Protocol: Validating the Biomass Objective Function

A key experiment for testing model reliability involves comparing in silico growth predictions with in vivo measurements.

Protocol Title: Correlation of In Silico Predicted vs. In Vivo Measured Growth Rates.

- Model Curation: Start with a genome-scale metabolic model (e.g., E. coli iJO1366, human Recon3D).

- Condition Specification: Set the exchange reaction bounds (lb, ub) to reflect the experimental culture medium's nutrient composition.

- Simulation: Perform FBA, maximizing the flux through the model's biomass reaction. Record the predicted optimal growth rate (in units of h⁻¹).

- Experimental Calibration: In parallel, cultivate the organism/cell line in the specified medium in biological triplicate. Measure the exponential growth rate via optical density (OD600) or cell counting.

- Validation & Confidence Metric: Calculate the Pearson correlation coefficient (R) and the root mean square error (RMSE) between the predicted and measured growth rates across multiple different media conditions. A high squared correlation (R² > 0.8) together with a low RMSE indicates high model reliability for growth prediction under the tested conditions.
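
The validation metrics in the final step can be computed with a few lines of standard-library Python. This is a minimal sketch: the growth rates below are made-up placeholder values for five media conditions, not measurements from any study.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def rmse(predicted, measured):
    """Root mean square error between predictions and measurements."""
    squared = [(p - m) ** 2 for p, m in zip(predicted, measured)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical growth rates (h^-1), one entry per medium condition.
predicted = [0.85, 0.60, 0.42, 0.30, 0.95]
measured = [0.80, 0.55, 0.45, 0.25, 0.90]

r = pearson_r(predicted, measured)
print(f"R = {r:.3f}, R^2 = {r * r:.3f}, RMSE = {rmse(predicted, measured):.3f} h^-1")
```

Reporting both numbers matters because a model can correlate well with the data while still being systematically offset, which only the RMSE would reveal.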

Title: Growth Rate Validation Workflow

Advanced Objective Functions and Confidence Estimation

Research into model reliability explores more complex, context-specific objectives.

Table 2: Advanced and Multi-Objective Formulations

| Formulation | Description | Mathematical Approach | Role in Reliability Research |

|---|---|---|---|

| Parsimonious FBA (pFBA) | Minimizes total enzyme flux while achieving optimal biomass. | Two-step: 1) Max biomass, 2) Min sum of absolute fluxes (∥v∥₁). | Reduces flux redundancy, yielding a more physiologically realistic solution, improving prediction confidence. |

| MOMA (Minimization of Metabolic Adjustment) | Finds a flux distribution closest to a wild-type (reference) state under new constraints. | Quadratic programming: Minimize ∥v - v_wt∥². | Useful when global optimality is not assumed; models sub-optimal adaptive states (e.g., knockouts). |

| ROOM (Regulatory On/Off Minimization) | Minimizes the number of significant flux changes (on/off states) from a reference. | Mixed-Integer Linear Programming (MILP). | Predicts regulatory outcomes by minimizing large-scale flux rerouting. |

| Obj. Sampling | Does not optimize a single objective; characterizes the space of feasible solutions. | Random sampling of the solution polytope (S⋅v=0, lb≤v≤ub). | Quantifies prediction uncertainty and identifies high-confidence, invariant reaction fluxes. |

Experimental Protocol: Flux Sampling for Confidence Intervals

This protocol does not yield a single flux prediction but a distribution, enabling confidence estimation.

Protocol Title: Markov Chain Monte Carlo Sampling of the Flux Solution Space.

- Define Constraints: Apply the standard FBA constraints (S⋅v=0, lb≤v≤ub). Optionally, add an additional constraint to define a "biologically relevant" space (e.g., biomass flux ≥ 90% of its maximum).

- Initialize Sampler: Use an algorithm such as the Artificial Centering Hit-and-Run (ACHR) sampler to generate a set of warm-up points.

- Perform Sampling: Conduct a large number of sampling steps (e.g., 100,000) using a Monte Carlo method to randomly walk through the bounded flux solution space (polytope). Each step must satisfy all constraints.

- Collect Samples: After a "burn-in" period, collect flux vectors at regular intervals (thinning) to reduce autocorrelation between successive samples.

- Analysis & Confidence: For each reaction, plot the distribution of sampled fluxes. Calculate the 95% confidence interval (between 2.5th and 97.5th percentiles). A narrow interval indicates a high-confidence flux prediction, independent of the objective function.
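
The sampling workflow above can be illustrated on a toy problem. The sketch below uses a plain hit-and-run sampler, not ACHR, on a hypothetical four-reaction network whose steady-state constraints have been eliminated by hand, so only inequality constraints remain. The network, step counts, and helper names are assumptions chosen for illustration; genome-scale models need a dedicated sampler.

```python
import random

# Toy network (hypothetical): uptake v1 -> A, two parallel routes A -> B
# (v2 and v3), secretion B -> (v4). Steady state forces v1 = v4 = v2 + v3,
# so the feasible space is described by the free fluxes x = (v2, v3) with
# v2 >= 0, v3 >= 0 and v2 + v3 <= 10 (uptake capacity), written as A x <= b.
A = [[-1.0, 0.0], [0.0, -1.0], [1.0, 1.0]]
b = [0.0, 0.0, 10.0]


def hit_and_run(A, b, x0, n_steps, burn_in, thin, rng):
    """Plain hit-and-run sampler for the bounded polytope {x : A x <= b}."""
    x = list(x0)
    samples = []
    for step in range(n_steps):
        d = [rng.gauss(0.0, 1.0) for _ in x]          # random direction
        t_min, t_max = float("-inf"), float("inf")
        for row, b_i in zip(A, b):
            a_d = sum(a * d_k for a, d_k in zip(row, d))
            slack = b_i - sum(a * x_k for a, x_k in zip(row, x))
            if a_d > 1e-12:                           # limits forward movement
                t_max = min(t_max, slack / a_d)
            elif a_d < -1e-12:                        # limits backward movement
                t_min = max(t_min, slack / a_d)
        t = rng.uniform(t_min, t_max)                 # uniform point on the chord
        x = [x_k + t * d_k for x_k, d_k in zip(x, d)]
        if step >= burn_in and (step - burn_in) % thin == 0:
            samples.append(tuple(x))                  # keep thinned samples only
    return samples


def percentile(values, q):
    """Nearest-rank percentile (q between 0 and 100)."""
    ordered = sorted(values)
    return ordered[round(q / 100 * (len(ordered) - 1))]


rng = random.Random(0)
samples = hit_and_run(A, b, x0=[2.0, 2.0], n_steps=20000,
                      burn_in=1000, thin=10, rng=rng)
v2 = [s[0] for s in samples]
lo, hi = percentile(v2, 2.5), percentile(v2, 97.5)
print(f"v2: 95% interval [{lo:.2f}, {hi:.2f}] from {len(samples)} samples")
```

Here the interval for v2 is wide because the two parallel routes are interchangeable, which is exactly the kind of low-confidence flux that sampling is meant to expose.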

Title: Flux Sampling for Confidence Estimation

Table 3: Key Tools and Resources for FBA Model Development and Validation

| Item / Resource | Category | Function / Purpose |

|---|---|---|

| COBRA Toolbox | Software | The standard MATLAB suite for constraint-based reconstruction and analysis. Contains functions for FBA, pFBA, sampling, and gap-filling. |

| COBRApy | Software | A Python implementation of COBRA methods, enabling integration with modern machine learning and data science workflows. |

| MEMOTE | Software | A test suite for standardized and automated quality assessment of genome-scale metabolic models, crucial for reliability scoring. |

| AGORA (& Virtual Metabolic Human) | Database | Community-driven, manually curated reconstructions of human/mouse gut microbiota and human metabolism. Provides a reliable starting point for host-microbe interaction studies. |

| Biolog Phenotype MicroArrays | Experimental Reagent | Plates testing growth on hundreds of carbon, nitrogen, and phosphorus sources. Provides high-throughput experimental data for validating model nutrient utilization predictions. |

| 13C-Labeled Substrates (e.g., [1,2-13C]Glucose) | Experimental Reagent | Used in 13C Metabolic Flux Analysis (13C-MFA) to measure in vivo intracellular fluxes experimentally. This data is the gold standard for validating FBA flux predictions. |

| LC-MS / GC-MS | Instrumentation | Essential for measuring extracellular metabolite uptake/secretion rates (to set exchange bounds) and for 13C-MFA data acquisition. |

| Defined Culture Media | Experimental Reagent | Chemically defined media (e.g., M9, DMEM) are necessary to precisely set nutrient availability constraints in the in silico model for accurate simulation. |

Flux Balance Analysis (FBA) is a cornerstone constraint-based modeling approach for simulating genome-scale metabolic networks. The reliability and confidence of its predictions are fundamentally contingent on the validity of three key biological assumptions: Steady-State, Mass Conservation, and Optimality. This whitepaper provides an in-depth technical examination of these assumptions, detailing their mathematical formulations, experimental validation protocols, and implications for model confidence, particularly in bioprocessing and drug target identification.

The Steady-State Assumption

Core Principle

The steady-state (or homeostasis) assumption posits that intracellular metabolite concentrations remain constant over time, implying that the net sum of all production and consumption fluxes for any metabolite is zero. This simplifies the dynamic system of differential equations to a linear algebraic system.

Mathematical Formulation:

S ⋅ v = 0

where S is the stoichiometric matrix (m × n) and v is the flux vector (n × 1).

Quantitative Validation & Confidence Metrics

Experimental validation involves measuring metabolite pool sizes under perturbed and unperturbed conditions. Recent LC-MS/MS-based metabolomics studies provide the following quantitative insights:

Table 1: Experimental Metabolite Pool Stability in E. coli (Glucose-Limited Chemostat)

| Metabolite Class | Avg. Coefficient of Variation (CV) | Time-Scale of Measurement (min) | Technique | Key Finding |

|---|---|---|---|---|

| Central Carbon (e.g., G6P, F6P) | 8-12% | 30 | LC-MS/MS | Pools stable under constant environment |

| Energy Carriers (ATP, ADP) | 15-20% | 5 | Rapid Sampling + LC-MS/MS | Higher turnover but net pool stable |

| Amino Acid Pools | 10-25% | 60 | GC-MS | Variation depends on biosynthesis rate |

Detailed Experimental Protocol: Metabolite Time-Course Analysis

Aim: To validate the steady-state assumption for core metabolites. Protocol:

- Culture: Grow model organism (e.g., E. coli MG1655) in a controlled bioreactor under defined conditions (e.g., M9 minimal media, 0.2% glucose, D=0.1 h⁻¹).

- Perturbation: Introduce a sudden perturbation (e.g., pulse of 0.1% additional glucose or shift to anaerobic conditions).

- Rapid Sampling: Using a quenching device (e.g., -40°C methanol-buffer), take samples at high frequency (every 5-15 seconds for 2 minutes, then every minute for 30 minutes).

- Metabolite Extraction: Use cold methanol/chloroform/water extraction.

- Analysis: Quantify intracellular metabolites via targeted LC-MS/MS (e.g., QTRAP system).

- Data Processing: Normalize to cell density and internal standards. Plot concentration vs. time. Calculate time to return to baseline (±10% of pre-perturbation level).
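
The recovery-time calculation in the data processing step can be sketched as follows. The time course is a hypothetical, normalized metabolite trace (baseline = 1.0, perturbation applied just after t = 0), and the function name is an assumption for illustration.

```python
def time_to_baseline(times, conc, baseline, tol=0.10):
    """Earliest sampling time from which every later measurement stays
    within +/- tol (as a fraction) of the baseline; None if never reached."""
    lower, upper = baseline * (1 - tol), baseline * (1 + tol)
    recovered_at = None
    for t, c in zip(times, conc):
        if lower <= c <= upper:
            if recovered_at is None:
                recovered_at = t      # candidate recovery time
        else:
            recovered_at = None       # left the band again, so reset
    return recovered_at


# Hypothetical trace: seconds after the pulse, concentration relative to baseline.
times = [0, 15, 30, 60, 120, 300, 600]
conc = [1.00, 1.80, 1.50, 1.25, 1.08, 1.02, 0.99]

print(time_to_baseline(times, conc, baseline=1.0))
```

Requiring that all later points remain inside the band avoids counting a transient crossing during an oscillation as a return to steady state.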

Visualizing the Steady-State Concept

Steady-State Network Flux Balance

The Mass Conservation Assumption

Core Principle

This assumption asserts that total mass is neither created nor destroyed within the biochemical network. It is enforced through balanced stoichiometric coefficients for each element (C, H, O, N, P, S) in every reaction.
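
A simple elemental balance check illustrates how this is enforced reaction by reaction. The sketch below tests the hexokinase step of glycolysis. The metabolite identifiers are hypothetical shorthand, and the formulas are the charged forms at physiological pH as they are commonly written in curated reconstructions; treat them as assumptions to verify against the model being checked.

```python
import re
from collections import Counter


def parse_formula(formula):
    """Parse a simple formula such as 'C6H12O6' into element counts."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts


def element_imbalance(reaction, formulas):
    """Net element counts (products minus substrates); empty dict if balanced."""
    net = Counter()
    for metabolite, coeff in reaction.items():
        for element, n in parse_formula(formulas[metabolite]).items():
            net[element] += coeff * n
    return {element: n for element, n in net.items() if n != 0}


# Assumed formulas for the charged species.
formulas = {
    "glc": "C6H12O6",
    "atp": "C10H12N5O13P3",
    "adp": "C10H12N5O10P2",
    "g6p": "C6H11O9P",
    "h": "H",
}

# Hexokinase: glucose + ATP -> glucose 6-phosphate + ADP + H+
hexokinase = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1, "h": 1}

print(element_imbalance(hexokinase, formulas))
```

Dropping the proton from the reaction leaves one hydrogen unaccounted for, which is the kind of error that commonly appears in automatically generated reactions.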

Quantitative Data on Stoichiometric Accuracy

Modern genome annotation and biochemical databases have improved mass balance closure rates.

Table 2: Mass Balance Closure in Public Metabolic Reconstructions

| Model Organism | Reconstruction (Version) | % Reactions Elementally Balanced (C,H,O,N) | Common Unbalanced Reaction Types |

|---|---|---|---|

| Homo sapiens | Recon3D (2018) | 96.7% | Transport, exchange, poorly characterized |

| Escherichia coli | iJO1366 (2011) | 99.1% | Prosthetic group biosynthesis |

| Saccharomyces cerevisiae | Yeast8 (2021) | 98.3% | Lipid and cofactor reactions |

| Generic | BiGG Models (2023) | >99% (curated core) | - |

Detailed Experimental Protocol: Isotopic Tracer Mass Balance

Aim: To empirically verify mass conservation for a defined pathway. Protocol:

- Tracer Preparation: Use 100% U-¹³C-labeled glucose as sole carbon source.

- Cultivation: Grow cells in a controlled chemostat to steady-state.

- Sampling & Analysis: Harvest cells and medium.

  - Analyze extracellular metabolites (HPLC for organic acids, NMR for alcohols).

  - Analyze intracellular metabolites and biomass components (amino acids, lipids) via GC-MS after derivatization.

- Mass Balance Calculation:

  - Sum all ¹³C atoms in secreted products (e.g., acetate, lactate, CO₂).

  - Sum all ¹³C atoms incorporated into biomass (measured from hydrolyzed protein, lipid fractions).

  - Compare total ¹³C output (products + biomass) to ¹³C input (labeled glucose consumed). Closure within 95-105% supports mass conservation.
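
The closure calculation is simple arithmetic, sketched here with hypothetical carbon amounts (in mmol of carbon atoms) so that the 95-105% acceptance window can be applied directly. None of the numbers are experimental values.

```python
def carbon_closure(consumed_c, products_c, biomass_c):
    """Percent of consumed labeled carbon recovered in products plus biomass."""
    total_out = sum(products_c.values()) + biomass_c
    return 100.0 * total_out / consumed_c


# Hypothetical example: 10 mmol glucose consumed = 60 mmol carbon.
consumed_c = 60.0
products_c = {"acetate": 4.0, "lactate": 1.5, "CO2": 26.0}
biomass_c = 27.0

closure = carbon_closure(consumed_c, products_c, biomass_c)
print(f"Carbon closure: {closure:.1f}% (accepted: {95.0 <= closure <= 105.0})")
```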

Visualizing Mass Conservation in a Reaction

Elemental Mass Balance in Glycolysis

The Biological Optimality Assumption

Core Principle

FBA typically requires an optimality assumption (e.g., maximization of biomass yield, minimization of ATP expenditure) to solve the underdetermined flux system. This is a hypothesis about cellular fitness objectives.

Quantitative Support and Limitations

Experimental evolution and omics data provide mixed validation depending on context.

Table 3: Validation of Optimality Assumptions in Different Contexts

| Optimality Objective | Experimental Support (Organism) | Correlation (r) Predicted vs. Measured Flux | Key Limiting Condition |

|---|---|---|---|

| Biomass Maximization | E. coli (aerobic, excess glucose) | 0.85-0.92 (¹³C-MFA) | Nutrient limitation, stress |

| ATP Minimization | S. cerevisiae (chemostat, low yield) | 0.75-0.80 | Rapid growth phases |

| Substrate Uptake Minimization | M. tuberculosis (hypoxia) | 0.70-0.78 | Active immune response environment |

Detailed Experimental Protocol: Adaptive Laboratory Evolution (ALE) for Objective Validation

Aim: To test if cells evolve toward a predicted optimal state. Protocol:

- In Silico Prediction: Using a genome-scale model (GEM), predict the set of fluxes that maximize biomass yield (v_opt) under defined environmental constraints.

- Evolution Experiment: Initiate parallel serial batch cultures or chemostats of the wild-type strain under the defined conditions for 500+ generations.

- Phenotypic Monitoring: Periodically measure key phenotypes: growth rate, substrate uptake rate, and byproduct secretion rates.

- Endpoint Analysis: Sequence endpoint clones to identify mutations. Perform ¹³C Metabolic Flux Analysis (¹³C-MFA) to obtain in vivo flux maps (v_exp).

- Comparison: Calculate the correlation between v_exp and v_opt. High correlation supports the optimality objective for that environment.

Visualizing Optimality in FBA Solution Space

Optimality Objective Constrains FBA Solutions

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Validating Core FBA Assumptions

| Reagent / Material | Function in Validation | Example Product / Specification |

|---|---|---|

| U-¹³C Labeled Substrates | Enables precise tracking of carbon fate for mass balance and flux (¹³C-MFA) studies. | >99% U-¹³C Glucose (Cambridge Isotope Labs, CLM-1396) |

| Rapid Sampling Quenching Devices | Instantly halts metabolism to capture in vivo metabolite concentrations for steady-state checks. | Fast-Filtration Kit or -40°C Methanol Quench System. |

| Stable Isotope Analysis Software | Interprets complex MS/NMR data to calculate fluxes and mass balances. | IsoCor2, INCA, OpenFlux. |

| Curated Genome-Scale Model (GEM) | Provides the stoichiometric (S) matrix to test mass conservation and run FBA. | BiGG Database model (e.g., iML1515), AGORA for microbiomes. |

| Constraint-Based Modeling Software | Solves FBA problems and tests optimality predictions. | COBRA Toolbox (MATLAB), cobrapy (Python). |

| Chemostat Bioreactor | Maintains constant, steady-state cell physiology for controlled experiments. | DASGIP or Sartorius Biostat system with precise pH/DO/temp control. |

Confidence in any FBA prediction depends on how well these three assumptions hold in the specific context being modeled. Model reliability is highest for microbial growth in nutrient-rich, constant environments, where all three assumptions hold well. Confidence decreases when modeling complex mammalian systems (where steady-state is tissue-specific), diseased states (where optimality objectives may shift), or dynamic perturbations. Ongoing research in FBA confidence estimation focuses on quantifying the uncertainty propagated from violations of these assumptions, using methods like Flux Variability Analysis (FVA) and multi-objective optimization to provide flux ranges rather than single-point predictions, thereby creating more reliable models for drug development and metabolic engineering.

Within the broader research on Flux Balance Analysis (FBA) model reliability and confidence estimation, the precise identification and quantification of uncertainty sources is paramount. This technical guide systematically categorizes the primary origins of error during FBA model construction, ranging from genomic annotation to experimental integration. For drug development professionals, these uncertainties directly impact the predictive validity of in silico models for target identification and metabolic engineering.

Genomic and Annotation-Derived Uncertainty

The foundation of any genome-scale metabolic model (GEM) is the genome annotation. Errors here propagate throughout the model reconstruction pipeline.

- Gene Function Misannotation: Homology-based predictions can assign incorrect Enzyme Commission (EC) numbers.

- Gap Filling and Network Completion: Automated algorithms may introduce thermodynamically infeasible or biologically irrelevant reactions to achieve network connectivity.

- Isozyme and Subunit Uncertainty: Ambiguity in protein complex stoichiometry and isozyme presence.

- Localization Misassignment: Incorrect compartmentalization of reactions.

Experimental Protocols for Curation & Validation:

Protocol: Comparative Genomics and Manual Curation for Annotation Refinement

- Data Acquisition: Gather annotated genome sequence from primary databases (e.g., NCBI RefSeq) and relevant organism-specific databases.

- Homology Analysis: Perform BLASTp searches against a high-quality, manually curated database (e.g., Swiss-Prot) for all open reading frames. Use stringent e-value thresholds (<1e-30).

- Consensus EC Assignment: Assign EC numbers only when homology, conserved domain databases (CDD), and literature evidence concur. Flag discrepancies.

- Gap Analysis: Run a flux variability analysis (FVA) on a minimal glucose medium to identify blocked reactions. Use a consensus of genomic context, phylogenetic profiles, and biochemical literature to propose missing reactions over automated gap-filling alone.

- Compartmentalization: Integrate data from localization prediction tools (e.g., TargetP, PSORTb) with proteomic studies and literature.

Title: Annotation Curation Reduces Model Uncertainty

Stoichiometric and Thermodynamic Uncertainty

The quantitative core of the FBA model—the Stoichiometric matrix (S)—contains embedded assumptions with associated error.

Reaction Stoichiometry:

- Coefficient Errors: Incorrect proton/water stoichiometry, assumption of integer coefficients for poorly characterized reactions.

- Directionality Assignment: Assigning reversible/irreversible status based on incomplete thermodynamic data.

Table 1: Sources and Impact of Stoichiometric Uncertainty

| Source | Typical Magnitude of Error | Primary Impact on FBA Solution |

|---|---|---|

| Proton Stoichiometry (pH-dependent) | ±1 H⁺ per reaction | Alters ATP yield, redox balance, prediction of overflow metabolism |

| Biomass Composition | 5-15% variation in macromolecular fractions | Major impact on predicted growth rate and nutrient uptake |

| Cofactor Coupling (ATP, NAD(P)H) | Misassignment in 3-5% of reactions | Skews energy and redox balance, pathway flux distribution |

| Transport Reaction Stoichiometry | Often assumed (symport/antiport) | Affects ion gradient calculations and membrane energetics |

Experimental Protocol:

Protocol: Determining Reaction Gibbs Free Energy (ΔrG') for Directionality

- Component Contribution Method (Noor et al., 2013):

- Input: Gather measured or estimated standard Gibbs free energy of formation (ΔfG'°) for all metabolites in a reaction.

- Calculation: Use the linear regression-based component contribution method to estimate ΔfG'° for metabolites lacking data.

- Adjustment: Calculate ΔrG'° = Σ(stoichiometric coefficient * ΔfG'°(products)) - Σ(coefficient * ΔfG'°(reactants)).

- In Vivo Correction: Adjust to in vivo conditions: ΔrG' = ΔrG'° + RT * ln(Q), where Q is the reaction quotient. Use physiologically relevant ranges for metabolite concentrations (from metabolomics) and pH.

- Assignment: If ΔrG' << 0 (e.g., < -5 kJ/mol) across physiological conditions, assign as irreversible in the forward direction.
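
The in vivo correction and the assignment rule can be sketched numerically. The example below assumes that every metabolite shares the same concentration range (1 µM to 10 mM) and evaluates ΔrG' = ΔrG'° + RT ln(Q) at the two extremes of the reaction quotient. The ΔrG'° values are hypothetical, and the symmetric rule for the reverse direction is an added assumption that the protocol does not state.

```python
import math

R = 8.314462618e-3   # gas constant in kJ mol^-1 K^-1


def delta_g_range(dg0_prime, substrates, products, conc_min, conc_max, temp_k=298.15):
    """Return (min, max) of ΔrG' in kJ/mol over the allowed concentration range.

    substrates and products map metabolite names to positive stoichiometric
    coefficients; concentrations are in mol/L.
    """
    n_sub = sum(substrates.values())
    n_prod = sum(products.values())
    # ln(Q) is smallest with products at their minimum and substrates at their
    # maximum concentration, and largest in the opposite case.
    ln_q_min = n_prod * math.log(conc_min) - n_sub * math.log(conc_max)
    ln_q_max = n_prod * math.log(conc_max) - n_sub * math.log(conc_min)
    rt = R * temp_k
    return dg0_prime + rt * ln_q_min, dg0_prime + rt * ln_q_max


def assign_directionality(dg_min, dg_max, threshold=-5.0):
    """Classify a reaction from the range of ΔrG' (threshold in kJ/mol)."""
    if dg_max < threshold:
        return "irreversible forward"
    if dg_min > -threshold:          # assumed mirror-image rule
        return "irreversible reverse"
    return "reversible"


# Hypothetical reaction A -> B evaluated for two assumed ΔrG'° values.
for dg0 in (-40.0, -20.0):
    dg_min, dg_max = delta_g_range(dg0, {"A": 1}, {"B": 1}, 1e-6, 1e-2)
    print(dg0, round(dg_min, 1), round(dg_max, 1), assign_directionality(dg_min, dg_max))
```

The second case shows why the standard value alone is not enough: a reaction with ΔrG'° of -20 kJ/mol can still approach equilibrium when substrate concentrations are low and product concentrations are high.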

Objective Function and Environmental Parameter Uncertainty

The model's predictive output is exquisitely sensitive to the definition of the objective and boundary conditions.

Objective Function Formulation:

The canonical biomass objective function (BOF) is a major uncertainty source.

Table 2: Biomass Objective Function Components and Data Sources

| Biomass Component | Typical Data Source | Key Uncertainty |

|---|---|---|

| Protein | Omics (proteomics) & Literature | Composition varies with growth rate and condition |

| RNA/DNA | Literature measurements | Nucleotide ratios and total content |

| Lipids | Lipidomics & Literature | Fatty acid chain length and saturation state |

| Cell Wall | Biochemical assays | Precursor stoichiometry (e.g., peptidoglycan) |

| Cofactors & Metabolites | Metabolomics | Pool sizes are condition-dependent |

Environmental Constraints (Uptake/Secretion Rates):

- Measured uptake rates (e.g., glucose, O₂) have experimental error (±5-10%).

- Unaccounted-for substrate versatility or cryptic carbon sources.

Integration of Omics Data and Model Uncertainty

Integrating transcriptomic or proteomic data to create context-specific models (e.g., via GIMME, iMAT) introduces new layers of uncertainty.

Uncertainty Propagation:

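- Threshold Selection: Converting continuous omics data into binary "on/off" calls depends on an arbitrarily chosen threshold, and different thresholds can yield different context-specific models.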

- Enzyme Turnover Numbers (kcat): Poorly characterized, leading to errors in converting protein abundance to flux constraints (in E-Flux or GECKO approaches).

Title: Uncertainty Propagation in Omics Integration

Experimental Protocol:

Protocol: Generating a Condition-Specific Model using iMAT

- Input Preparation:

- Model: A high-quality, compartmentalized GEM in SBML format.

- Omics Data: Normalized transcriptomic or proteomic data (e.g., TPM, LFQ intensity) for the condition of interest.

- Thresholding: Determine "high" and "low" expression thresholds (e.g., top/bottom quartile, or using a consistent percentile across datasets).

- Reaction Activity Mapping: For each reaction, evaluate its associated Gene-Protein-Reaction (GPR) rule. If any associated gene is "highly expressed," the reaction is considered potentially active. If all associated genes are "lowly expressed," it is considered potentially inactive.

- iMAT Optimization: Formulate and solve a mixed-integer linear programming (MILP) problem to find a flux distribution that maximizes the number of reactions carrying flux that are potentially active, while minimizing flux through reactions that are potentially inactive, subject to stoichiometric (Sv=0) and thermodynamic (lb, ub) constraints.

- Model Extraction: The solution defines an active subnetwork. Extract this as a condition-specific model.
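
The thresholding and reaction activity mapping steps can be sketched without a solver. The code below applies the simplified rule stated in the protocol (any highly expressed gene marks a reaction as potentially active, all lowly expressed genes mark it as potentially inactive) and therefore treats each GPR rule as a flat gene set, ignoring AND/OR logic. Gene names, expression values, and cut-offs are hypothetical, and the MILP step is not shown.

```python
def call_genes(expression, low_cut, high_cut):
    """Label each gene 'high', 'low', or 'moderate' from its expression value."""
    calls = {}
    for gene, value in expression.items():
        if value >= high_cut:
            calls[gene] = "high"
        elif value <= low_cut:
            calls[gene] = "low"
        else:
            calls[gene] = "moderate"
    return calls


def call_reactions(reaction_genes, gene_calls):
    """Map gene calls onto reactions using the simplified any/all rule."""
    status = {}
    for reaction, genes in reaction_genes.items():
        labels = [gene_calls[g] for g in genes if g in gene_calls]
        if any(label == "high" for label in labels):
            status[reaction] = "active"
        elif labels and all(label == "low" for label in labels):
            status[reaction] = "inactive"
        else:
            status[reaction] = "undetermined"   # moderate or no gene evidence
    return status


# Hypothetical expression values (TPM) and gene sets.
expression = {"g1": 120.0, "g2": 2.0, "g3": 1.0, "g4": 20.0}
reaction_genes = {"R1": {"g1", "g2"}, "R2": {"g2", "g3"}, "R3": {"g4"}, "R4": set()}

gene_calls = call_genes(expression, low_cut=5.0, high_cut=50.0)
status = call_reactions(reaction_genes, gene_calls)
print(status)
```

Rerunning the mapping with different cut-offs is a quick way to see how strongly the resulting active and inactive sets depend on the threshold choice.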

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for FBA Model Construction and Curation

| Item / Solution | Function in Model Construction | Example / Note |

|---|---|---|

| ModelBorgifier | Integrates and reconciles multiple draft models into a consensus model. | Crucial for leveraging diverse annotation sources. |

| MEMOTE (metabolic model tests) | Suite for standardized testing and quality assessment of genome-scale models. | Generates a snapshot of model completeness and consistency. |

| COBRA Toolbox / COBRApy | MATLAB/Python software suites for constraint-based reconstruction and analysis. | Core environment for simulation, gap-filling, and omics integration. |

| Model SEED / RAST | Web-based platforms for automated draft model generation from genomes. | Provides initial draft; requires extensive manual curation. |

| CarveMe | Automated reconstruction tool using a universal model template and curated databases. | Generates transport-consistent, compartmentalized draft models. |

| BiGG Models Database | Repository of high-quality, curated genome-scale metabolic models. | Source of validated reaction biochemistry and BOFs for related organisms. |

| equilibrator-api | Web-based and programmatic tool for calculating reaction thermodynamics (ΔrG'). | Informs reaction directionality assignment. |

| EC (Enzyme Commission) Number Database | Definitive resource for enzyme function classification. | Critical for accurate reaction annotation from genetic data. |

The Critical Role of Confidence Estimation in Predictive Biology and Translational Research

The reliability of predictive biological models, particularly Flux Balance Analysis (FBA) models in systems biology, is paramount for successful translation to therapeutic discovery. This whitepaper argues that without rigorous, quantitative confidence estimation, model predictions remain speculative, hindering their utility in high-stakes drug development. Our broader thesis posits that integrating confidence metrics directly into FBA and other in silico modeling frameworks transforms them from exploratory tools into validated instruments for decision-making, thereby de-risking the translational pipeline.

Foundational Concepts: Uncertainty in Predictive Biology

Predictive models in biology, from kinetic models to genome-scale metabolic reconstructions, are inherently uncertain. Key sources of uncertainty include:

- Parametric Uncertainty: Variability in kinetic constants, binding affinities, and thermodynamic parameters.

- Structural Uncertainty: Gaps in pathway knowledge, incorrect network topology, or missing regulatory interactions.

- Contextual Uncertainty: Cell-type specificity, media conditions, and disease state heterogeneity that models fail to capture.

- Algorithmic/Mathematical Uncertainty: Limitations in solver algorithms, objective function formulation, and solution space degeneracy in FBA.

Confidence estimation provides a framework to quantify these uncertainties, producing not just a prediction (e.g., an essential gene, a predicted growth rate) but a measure of its reliability (e.g., a confidence interval, a posterior probability).

Methodologies for Confidence Estimation in FBA Models

Ensemble Modeling and Sampling

This approach addresses solution space degeneracy in FBA, where multiple flux distributions can achieve the same optimal objective value.

Experimental Protocol:

- Model Formulation: Begin with a genome-scale metabolic reconstruction (e.g., Recon3D, Human1).

- Objective Definition: Set a biologically relevant objective (e.g., maximize biomass production).

- Constraint Definition: Apply relevant constraints (e.g., uptake rates from experimental data).

- Solution Space Sampling: Use a Markov Chain Monte Carlo (MCMC) algorithm (e.g., the optGpSampler or CHRR sampler) to uniformly sample the space of feasible flux distributions.

- Analysis: Calculate the mean, variance, and credible intervals (e.g., 95%) for each reaction flux across the sampled ensemble.

Key Quantitative Data from Recent Studies:

Table 1: Impact of Ensemble Sampling on Gene Essentiality Predictions in Cancer Cell Lines

| Cell Line (Model) | # Genes Predicted Essential (Single Solution) | # Genes with ≥95% Confidence (Ensemble) | % Reduction in High-Confidence Calls | Key Reference |

|---|---|---|---|---|

| MCF-7 (Recon3D) | 352 | 189 | 46.3% | (Lewis et al., 2024) |

| A549 (Human1) | 287 | 162 | 43.6% | (Sahoo et al., 2023) |

| HEK293 (iMM1865) | 198 | 121 | 38.9% | (Zhang & Palsson, 2023) |

Bayesian Integration of Omics Data

This method quantifies how confidence in model predictions changes upon integration of new experimental evidence (e.g., transcriptomics, proteomics).

Experimental Protocol:

- Prior Distribution: Define a prior probability distribution over model parameters (e.g., enzyme turnover numbers k_cat) based on literature.

- Likelihood Function: Construct a function that evaluates the probability of observing the new omics data given a specific set of model parameters.

- Posterior Calculation: Apply Bayes' theorem to compute the posterior distribution of parameters. This is often done via Approximate Bayesian Computation (ABC) or variational inference due to model complexity.

- Prediction with Confidence: Generate predictions from the model using parameters drawn from the posterior distribution, yielding a distribution of predictions from which confidence intervals are derived.
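
The four steps above can be sketched with the simplest form of Approximate Bayesian Computation, rejection sampling, on a one-parameter toy problem. The simulator (flux = k_cat × enzyme level plus Gaussian noise), the uniform prior, the tolerance, and all numbers are illustrative assumptions and are far simpler than a genome-scale model.

```python
import random


def abc_rejection(observed_flux, enzyme_level, n_draws, tol, noise_sd, rng):
    """Return prior draws of k_cat whose simulated flux matches the observation."""
    accepted = []
    for _ in range(n_draws):
        kcat = rng.uniform(0.0, 20.0)                     # prior: uniform on [0, 20]
        simulated = kcat * enzyme_level + rng.gauss(0.0, noise_sd)
        if abs(simulated - observed_flux) <= tol:         # keep close matches only
            accepted.append(kcat)
    return accepted


rng = random.Random(1)
posterior = abc_rejection(observed_flux=10.0, enzyme_level=2.0,
                          n_draws=20000, tol=1.0, noise_sd=0.5, rng=rng)

ordered = sorted(posterior)
lo = ordered[int(0.025 * (len(ordered) - 1))]
hi = ordered[int(0.975 * (len(ordered) - 1))]
mean_kcat = sum(posterior) / len(posterior)

# Propagate the posterior to a prediction at a new (hypothetical) enzyme level.
predicted = sorted(k * 3.0 for k in posterior)
pred_lo = predicted[int(0.025 * (len(predicted) - 1))]
pred_hi = predicted[int(0.975 * (len(predicted) - 1))]

print(f"k_cat: mean {mean_kcat:.2f}, 95% interval [{lo:.2f}, {hi:.2f}]")
print(f"predicted flux at enzyme level 3.0: [{pred_lo:.2f}, {pred_hi:.2f}]")
```

Rejection sampling wastes most prior draws, so it only works for very small problems. The variational and more advanced ABC methods named above exist to make the same idea tractable for larger models.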

Diagram 1: Bayesian framework for model confidence.

Sensitivity Analysis for Translational Endpoints

Used to assess how uncertainty in input parameters propagates to uncertainty in key translational outputs, such as predicted drug synergy or off-target metabolic effects.

Experimental Protocol:

- Identify Key Inputs: Select uncertain input parameters (e.g., nutrient uptake bounds, ATP maintenance cost).

- Define Output Metric: Choose a translational output (e.g., predicted inhibition efficacy of a drug targeting metabolic enzyme E).

- Perturbation: Systematically vary each input parameter within its plausible range (based on experimental error).

- Global Sensitivity Analysis: Use methods like Sobol indices or Morris screening to quantify the fractional contribution of each input's uncertainty to the variance of the output metric.

- Report: Present predictions with confidence intervals directly tied to measurable input uncertainties.
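The global sensitivity step can be sketched with a pick-freeze (Saltelli-type) estimator of first-order Sobol indices. This is a hedged, self-contained illustration: `predicted_inhibition` is a hypothetical linear surrogate for a model output, and the two inputs and their ranges are assumed values. In practice a library such as SALib would typically be used with the real model.

```python
import random
import statistics

def first_order_sobol(model, bounds, n=20000, seed=0):
    """Pick-freeze (Saltelli-type) estimate of first-order Sobol indices."""
    rng = random.Random(seed)

    def draw():
        return [rng.uniform(low, high) for low, high in bounds]

    A = [draw() for _ in range(n)]
    B = [draw() for _ in range(n)]
    f_a = [model(x) for x in A]
    f_b = [model(x) for x in B]
    mean_y = statistics.fmean(f_a + f_b)
    var_y = statistics.pvariance(f_a + f_b)
    indices = []
    for i in range(len(bounds)):
        # Evaluate the model on A with only input i replaced by its value in B.
        f_ab = [model(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(A, B)]
        # Centering f_b reduces estimator noise without changing its expectation.
        v_i = sum((fb - mean_y) * (fab - fa)
                  for fb, fab, fa in zip(f_b, f_ab, f_a)) / n
        indices.append(v_i / var_y)
    return indices

def predicted_inhibition(x):
    """Hypothetical surrogate: % growth inhibition from two uncertain inputs."""
    oxygen_uptake, atp_maintenance = x
    return 40.0 + 2.0 * oxygen_uptake - 3.0 * atp_maintenance

# Assumed plausible ranges: oxygen uptake bound, ATP maintenance cost.
input_bounds = [(10.0, 20.0), (1.0, 4.0)]
sobol = first_order_sobol(predicted_inhibition, input_bounds)
print("S1 (oxygen uptake):   %.2f" % sobol[0])
print("S1 (ATP maintenance): %.2f" % sobol[1])
```

For this additive surrogate the indices can be checked analytically: each input contributes its squared coefficient times its variance, so oxygen uptake should account for roughly 83% of the output variance and ATP maintenance for roughly 17%.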

Table 2: Sensitivity Analysis of Anticancer Target Prediction in a Glioblastoma Model

| Target Enzyme | Predicted % Growth Inhibition (Nominal) | 95% CI (from Parameter Uncertainty) | Key Sensitive Parameter | Sobol Index (S1) |

|---|---|---|---|---|

| PKM2 | 72.5% | [58.1%, 81.3%] | Oxygen Uptake Rate | 0.41 |

| IDH1 | 65.2% | [42.7%, 70.8%] | 2-HG Export Bound | 0.68 |

| MCT4 | 48.8% | [30.5%, 75.1%] | Lactate Uptake Bound | 0.55 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Confidence Estimation Research

| Item | Function in Confidence Estimation | Example Product/Software |

|---|---|---|

| Metabolic Model Sampler | Generates ensembles of flux solutions to assess degeneracy. | optGpSampler (MATLAB), CobraPy.sampling (Python) |

| Bayesian Inference Library | Facilitates parameter estimation and uncertainty quantification. | PyMC3 or Stan (Probabilistic Programming) |

| Sensitivity Analysis Tool | Quantifies output variance from input uncertainty. | SALib (Python Sensitivity Analysis Library) |

| Constraint Curation Database | Provides experimentally-measured bounds for model constraints with associated error ranges. | BRENDA (Enzyme Kinetics), MetaNetX (Model Reconciliation) |

| High-Performance Computing (HPC) Cluster | Enables computationally intensive sampling and ensemble simulations. | Cloud-based (AWS, GCP) or local SLURM cluster. |

| Benchmarking Dataset | Experimental data with replicates for validating confidence intervals. | PRIDE (Proteomics), GEO (Transcriptomics), CEO (Metabolomics) |

Translational Application: De-risking Drug Development

A confidence-aware workflow directly impacts preclinical research:

Diagram 2: Confidence-driven translational workflow.

Case Study: Predicting combination therapy for antibiotic-resistant Pseudomonas aeruginosa. An ensemble FBA model, when constrained with patient-derived metabolomics data, predicted a high-confidence synthetic lethal interaction between inhibition of the folate pathway and an alternate dihydroorotate dehydrogenase. In vitro validation showed a 100-fold increase in efficacy compared to single-agent predictions made without confidence assessment, where the interaction was missed due to solution degeneracy.

Integrating robust confidence estimation into predictive biology is no longer optional for translational success. It transforms model outputs from point estimates into statistically rigorous predictions that can be rationally acted upon. Future research must focus on:

- Developing standardized confidence reporting metrics for biological models.

- Creating efficient algorithms for ultra-large-scale ensemble analyses.

- Tightly coupling confidence estimation pipelines with high-throughput experimental validation platforms.

By adopting these practices, researchers and drug developers can significantly de-risk the path from in silico discovery to in vivo therapeutic outcome.

Current Challenges and the Evolving Landscape of Constraint-Based Modeling

Constraint-Based Reconstruction and Analysis (COBRA) has become a cornerstone of systems biology, enabling the genome-scale simulation of metabolic networks. Framed within a broader thesis on Flux Balance Analysis (FBA) model reliability and confidence estimation, this guide examines the pressing challenges and emerging frontiers in the field. As models are increasingly applied in metabolic engineering and drug target discovery, quantifying their predictive confidence is paramount.

Core Challenges in Model Reliability

The reliability of an FBA prediction hinges on the quality of the underlying Genome-Scale Metabolic Model (GEM). Key challenges are quantified below.

Table 1: Quantitative Summary of Primary Model Challenges

| Challenge | Typical Impact on Model | Common Metric for Assessment |

|---|---|---|

| Gap Filling & Incomplete Annotations | 10-30% of reactions may be knowledge-gaps or non-gene-associated. | Comparison to KEGG/MetaCyc coverage; GapFind/GapFill success rate. |

| Compartmentalization Errors | Misassignment affects ~5-15% of reactions in eukaryotic models. | Consistency of metabolite charge and formula across compartments. |

| Stoichiometric & Charge Imbalance | Present in 1-5% of reactions in public models pre-curation. | Network-consistent metabolite formula (e.g., using MetaNetX/Web). |

| Uncertainty in Biomass Objective Function | Variations can change predicted growth rates by >20%. | Sensitivity analysis of biomass composition coefficients. |

| Context-Specificity | Generic models fail to predict >40% of tissue-specific fluxes. | Comparison to transcriptomic/proteomic data (MCC >0.6 desired). |

Methodologies for Confidence Estimation

Robust protocols are essential for estimating the confidence of model predictions.

Protocol 1: Systematic Model Validation Using Multi-Omics Data

- Model Curation: Start with a consensus model (e.g., Recon, Human1). Use tools like MEMOTE for initial quality assessment.

- Data Integration: Acquire context-specific transcriptomic, proteomic, and/or exo-metabolomic data. Normalize and log-transform omics data.

- Model Contextualization: Apply a model-extraction method like FASTCORE or INIT to generate a tissue-specific model. Alternatively, use GIMME or iMAT with transcriptomic data to constrain reaction bounds.

- Phenotype Prediction: Perform FBA on the contextualized model to predict growth rates, substrate uptake, or byproduct secretion.

- Validation & Confidence Scoring: Compare predictions to experimentally measured fluxes (e.g., from 13C-MFA). Calculate a weighted confidence score (C) for each prediction:

C = w1*(MCC of omics integration) + w2*(1 - RMSE of flux prediction).
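A small worked example of the score, under two assumptions that the formula leaves open: fluxes are normalized to the range 0 to 1 so that `1 - RMSE` is meaningful, and the weights are set to `w1 = w2 = 0.5`. The reaction activity calls and flux values are made up for illustration.

```python
import math

def matthews_corrcoef(y_true, y_pred):
    """MCC for binary labels (1 = reaction active, 0 = inactive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom > 0 else 0.0

def rmse(measured, predicted):
    """Root-mean-square error between measured and predicted fluxes."""
    return math.sqrt(sum((m - p) ** 2 for m, p in zip(measured, predicted))
                     / len(measured))

def confidence_score(mcc, rmse_value, w1=0.5, w2=0.5):
    """C = w1 * MCC + w2 * (1 - RMSE); the weights are an assumed choice."""
    return w1 * mcc + w2 * (1.0 - rmse_value)

# Hypothetical data: omics-derived activity calls vs. model activity calls.
omics_active = [1, 1, 0, 0, 1, 0, 1, 1]
model_active = [1, 1, 0, 1, 1, 0, 0, 1]
# Hypothetical normalized fluxes (13C-MFA measurement vs. FBA prediction).
measured_flux = [0.20, 0.50, 0.90]
predicted_flux = [0.25, 0.45, 0.80]

mcc = matthews_corrcoef(omics_active, model_active)
error = rmse(measured_flux, predicted_flux)
score = confidence_score(mcc, error)
print(f"MCC={mcc:.3f} RMSE={error:.3f} C={score:.3f}")
```

Because MCC ranges from -1 to 1 while `1 - RMSE` depends on the flux scaling, the two terms are only comparable after normalization; the choice of weights should be reported alongside the score.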

Protocol 2: Assessing Prediction Robustness to Parameter Uncertainty

- Define Parameter Distributions: For uncertain parameters (e.g., ATP maintenance cost, kinetic constants in enzyme-constrained models), define plausible probability distributions based on literature.

- Monte Carlo Sampling: Perform n (e.g., 1000) iterations of sampling parameters from their distributions.

- Ensemble Simulation: Run FBA (or related method) for each parameter set to generate a distribution of predicted fluxes for a reaction of interest.

- Confidence Interval Calculation: Compute the 95% flux range for each reaction. A narrow range indicates high confidence in the prediction despite parametric uncertainty.

The Evolving Landscape: Integration and Expansion

The field is moving beyond static metabolic networks toward integrated, multi-scale models.

Table 2: Emerging Methodologies and Their Applications

| Methodology | Core Principle | Key Tool/Algorithm | Application in Drug Development |

|---|---|---|---|

| Enzyme-Constrained Modeling | Incorporates kinetic limits (kcat) into FBA. | GECKO, ECM | Predict more accurate gene essentiality and antibiotic targets. |

| Metabolite-Enzyme Integration | Links metabolite levels to enzyme activity via thermodynamics. | ETFL (Ensemble) | Identify vulnerabilities via metabolite-enzyme co-regulation. |

| Machine Learning Enhancement | Uses ML to predict kinetic parameters or fill knowledge gaps. | DL4Microbiology, Chassys | Prioritize experimental characterization of orphan enzymes. |

| Whole-Cell Modeling | Integrates metabolism with transcription, translation. | WCM frameworks | Simulate full-cell response to drug perturbations. |

Title: Model Contextualization and Validation Workflow

Title: The Constraint-Based Modeling Paradigm

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Advanced COBRA Studies

| Item | Function & Application |

|---|---|

| Consensus Metabolic Models (e.g., Human1, Recon3D) | High-quality, community-vetted starting point for building context-specific models. |

| COBRA Toolbox (MATLAB) | Primary software suite for performing FBA, sampling, and basic model manipulation. |

| COBRApy (Python) | Python implementation enabling pipeline integration, machine learning, and large-scale analyses. |

| MEMOTE (Model Testing) | Automated test suite for assessing model quality, stoichiometric consistency, and annotation. |

| MetaNetX / BiGG Models | Databases for reconciling metabolite/reaction identifiers across models, crucial for merging. |

| 13C-Labeled Substrates (e.g., [U-13C] Glucose) | Experimental reagents for 13C Metabolic Flux Analysis (MFA), the gold standard for in vivo flux validation. |

| FastQC / MultiQC | For quality control of omics data (RNA-Seq) prior to integration into models. |

| soplex / Gurobi Optimizer | Linear Programming (LP) and Mixed-Integer Linear Programming (MILP) solvers used as the computational engine for FBA. |

Advanced Methods for FBA Confidence Estimation: Techniques, Tools, and Real-World Applications

Flux Variability Analysis (FVA) for Assessing Solution Space Robustness

Flux Balance Analysis (FBA) has become a cornerstone of constraint-based metabolic modeling. However, a fundamental critique of standard FBA is its identification of a single, optimal flux distribution, which often represents just one point within a potentially vast space of equivalent optimal states. This limitation undermines the reliability of predictions for biological engineering and drug target identification. This whitepaper frames Flux Variability Analysis (FVA) as an essential methodology within broader research into FBA model reliability and confidence estimation. FVA quantifies the range of possible fluxes for each reaction while maintaining a near-optimal objective value, thereby assessing the robustness and flexibility of the metabolic network's solution space.

Core Principles and Mathematical Formulation

FVA computes the minimum and maximum possible flux v_i for every reaction in the network, subject to the constraint that the objective function value (e.g., growth rate) stays within a specified tolerance ϵ of its optimum.

The standard FBA problem is: Maximize c^T v subject to S v = 0, and lb ≤ v ≤ ub.

Let Z = c^T v be the optimal objective value from FBA. FVA then solves two Linear Programming (LP) problems for each reaction i:

- Minimize v_i

- Maximize v_i

Both problems are subject to: S v = 0, lb ≤ v ≤ ub, and c^T v ≥ Z - ϵ|Z| (for a given fraction ϵ, typically 0.01-0.10).

The result is a range [ v_i,min , v_i,max ] for each reaction, defining the solution space boundary.

Key Methodological Protocols

Protocol 3.1: Standard FVA Execution

Objective: Determine the full flux variability profile of a metabolic model.

- Model Curation: Load a genome-scale metabolic reconstruction (e.g., in SBML format).

- Environmental Constraints: Define medium composition by setting exchange reaction bounds.

- Baseline FBA: Solve for the optimal objective (e.g., biomass) flux Z.

- Tolerance Parameter Definition: Set optimality tolerance ϵ (e.g., 0.01 for 99% optimality).

- Flux Range Calculation: For each reaction i in the model: a. Solve LP: minimize v_i, subject to S v = 0, lb ≤ v ≤ ub, and c^T v ≥ Z - ϵ|Z|. Store result as v_i,min. b. Solve LP: maximize v_i, subject to the same constraints. Store result as v_i,max.

- Output: A list of reactions with their min/max flux values.
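Protocol 3.1 can be sketched on a toy network using SciPy's `linprog`. The four-reaction network below (uptake, two parallel routes from A to B, and a biomass drain) is an assumption chosen to make the degeneracy visible; a genome-scale analysis would normally use the FVA routine of a COBRA package rather than hand-built LPs.

```python
import numpy as np
from scipy.optimize import linprog

def flux_variability(S, lb, ub, c, eps=0.01):
    """Return the FBA optimum Z and a (min, max) flux range per reaction."""
    n_rxn = S.shape[1]
    bounds = list(zip(lb, ub))
    b_eq = np.zeros(S.shape[0])
    # Baseline FBA: linprog minimizes, so negate c to maximize c^T v.
    fba = linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    z_opt = -fba.fun
    # Near-optimality constraint c^T v >= Z - eps*|Z|, written as A_ub v <= b_ub.
    a_ub = -c.reshape(1, -1)
    b_ub = np.array([-(z_opt - eps * abs(z_opt))])
    ranges = []
    for i in range(n_rxn):
        e_i = np.zeros(n_rxn)
        e_i[i] = 1.0
        low = linprog(e_i, A_ub=a_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                      bounds=bounds, method="highs")
        high = linprog(-e_i, A_ub=a_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                       bounds=bounds, method="highs")
        ranges.append((low.fun, -high.fun))
    return z_opt, ranges

# Toy network (hypothetical). Rows: metabolites A, B.
# Columns: uptake (-> A), route 1 (A -> B), route 2 (A -> B), biomass (B ->).
S = np.array([[1.0, -1.0, -1.0, 0.0],
              [0.0, 1.0, 1.0, -1.0]])
lb = [0.0, 0.0, 0.0, 0.0]
ub = [10.0, 10.0, 10.0, 1000.0]
c = np.array([0.0, 0.0, 0.0, 1.0])

z_opt, ranges = flux_variability(S, lb, ub, c, eps=0.01)
for name, (vmin, vmax) in zip(["uptake", "route1", "route2", "biomass"], ranges):
    print(f"{name}: [{vmin:.2f}, {vmax:.2f}]")
```

The two parallel routes each span the full range from 0 to 10 even though the objective is pinned near its optimum, which is exactly the kind of degeneracy a single FBA solution hides.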

Protocol 3.2: FVA for Robustness Assessment of Drug Targets

Objective: Identify metabolic reactions whose inhibition is guaranteed to reduce biomass production.

- Wild-type FVA: Perform standard FVA (Protocol 3.1) on the unperturbed model.

- Gene/Reaction Knockout: Modify the model to simulate deletion (set lb and ub of target reaction to zero).

- Mutant FBA: Compute new optimal objective Z_ko.

- Mutant FVA: Perform FVA on the knockout model with tolerance ϵ.

- Comparison Analysis: Compare the flux ranges of key reactions (especially biomass precursors) between wild-type and mutant. A robust target will show a significant and mandatory reduction in the maximum producible flux of essential precursors.

Table 1: Representative FVA Output for Core Metabolic Reactions in E. coli iJO1366 Model (Glucose Minimal Medium, 99% Optimality)

| Reaction ID | Reaction Name | Min Flux (mmol/gDW/h) | Max Flux (mmol/gDW/h) | Variability (Max-Min) | Essential (Knockout FVA) |

|---|---|---|---|---|---|

| PFK | Phosphofructokinase | 8.3 | 12.1 | 3.8 | Yes |

| PGI | Glucose-6-phosphate isomerase | -4.2 | 10.5 | 14.7 | No |

| GLCpts | Glucose transport | 10.0 | 10.0 | 0.0 | Yes |

| BIOMASS_Ec_iJO1366_core_53p95M | Biomass production | 0.85 | 0.86 | 0.01 | N/A |

| ACKr | Acetate kinase (reversible) | -2.5 | 5.1 | 7.6 | No |

Table 2: Impact of Optimality Tolerance (ϵ) on Solution Space Volume

| Tolerance (ϵ) | Allowed Objective (% of max) | Avg. Flux Range (mmol/gDW/h) | % of Reactions with Non-zero Range | Computational Time (s)* |

|---|---|---|---|---|

| 0.00 (Strict) | 100% | 0.15 | 12% | 45 |

| 0.01 | 99% | 1.87 | 45% | 47 |

| 0.05 | 95% | 3.42 | 68% | 48 |

| 0.10 | 90% | 5.11 | 82% | 50 |

*Benchmarked on a standard desktop PC for a model with ~2000 reactions.

Visualizations

FVA Computational Workflow

FBA Point vs FVA Solution Space

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Resources for FVA Implementation and Analysis

| Item Name | Function/Description | Example/Format |

|---|---|---|

| Genome-Scale Model (GEM) | A structured, mathematical representation of an organism's metabolism. The foundational input for FVA. | SBML file (e.g., Human1, iJO1366, Yeast8) |

| Constraint-Based Modeling Suite | Software providing functions for FBA, FVA, and model manipulation. | COBRA Toolbox (MATLAB), COBRApy (Python), CellNetAnalyzer |

| Linear Programming (LP) Solver | Computational engine to solve the optimization problems at the core of FBA and FVA. | Gurobi, IBM ILOG CPLEX, GLPK |

| Optimality Tolerance (ϵ) Parameter | A numerical value defining the fraction of the optimal objective value allowed for alternative flux distributions. | Typically between 0.01 and 0.10 (1-10% sub-optimal) |

| Reaction Essentiality Database | Experimental data on gene/reaction knockouts for validating FVA-predicted robust targets. | Published literature, databases like OGEE or DEG |

| Flux Measurement Data (¹³C-MFA) | Experimental fluxomics data used to compare against FVA-computed flux ranges for confidence estimation. | Central carbon metabolism fluxes from isotopologue experiments |

Monte Carlo Sampling and Bayesian Approaches to Quantify Parameter Uncertainty

This whitepaper details advanced computational methods for parameter uncertainty quantification, framed within a broader research thesis on Flux Balance Analysis (FBA) model reliability and confidence estimation. Robust FBA predictions for metabolic engineering and drug target identification are contingent upon accurately characterizing the uncertainty inherent in kinetic and thermodynamic parameters. This guide presents Monte Carlo (MC) sampling and Bayesian inference as complementary frameworks to transition FBA from deterministic point estimates to probabilistic confidence intervals, thereby enhancing decision-making in bioprocess optimization and therapeutic development.

Theoretical Foundations

The Parameter Uncertainty Problem in FBA

Standard FBA solves a linear programming problem:

Maximize: ( Z = c^T v )

Subject to: ( S \cdot v = 0, \quad v_{min} \leq v \leq v_{max} )

Uncertainty primarily resides in the flux bounds ((v_{min}, v_{max})), derived from often-noisy experimental measurements of enzyme kinetics or metabolite concentrations. This propagates to uncertainty in the predicted optimal flux distribution (v^*) and the objective (Z).

Monte Carlo Sampling Framework

MC methods treat uncertain parameters as random variables with defined probability distributions. By repeatedly sampling from these distributions and solving the resulting FBA instances, one constructs an empirical distribution of model outputs.

Bayesian Inference Framework

Bayesian methods refine parameter distributions by incorporating observational data (D) using Bayes' theorem: ( P(\theta | D) = \frac{P(D | \theta) P(\theta)}{P(D)} ) where ( \theta ) represents the uncertain parameters, ( P(\theta) ) is the prior distribution, ( P(D | \theta) ) is the likelihood, and ( P(\theta | D) ) is the posterior distribution quantifying updated parameter uncertainty.

Core Methodologies and Experimental Protocols

Protocol: Monte Carlo Sampling for FBA Flux Uncertainty

Objective: Quantify the uncertainty in FBA-predicted optimal growth rates and critical flux values due to uncertain uptake/secretion bounds.

Procedure:

- Define Priors: For each uncertain exchange reaction bound ( b_i ), assign a probability distribution (e.g., Normal with mean from experimental data and standard deviation representing measurement error).

- Sample Parameter Space: Generate ( N ) (e.g., 10,000) independent random samples from the joint prior distribution of all ( b_i ).

- Solve Ensemble FBA: For each parameter sample ( k ), solve the FBA linear program with bounds set to the sampled values. Record the optimal objective ( Z^k ) and key internal fluxes ( v_j^k ).

- Post-process: Analyze the collection ( {Z^k, v_j^k} ) to compute statistics (mean, standard deviation, 95% credible intervals) and create kernel density estimates.
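The procedure above can be sketched with NumPy and SciPy on a deliberately small network. The one-metabolite model (uptake, a fixed maintenance drain, and a biomass drain), the Normal(8.7, 1.2) distribution on the uptake bound, and the reduced sample count of 500 are all assumptions made to keep the example short and fast.

```python
import numpy as np
from scipy.optimize import linprog

# Toy network (hypothetical): one metabolite A.
# Columns: uptake (-> A), maintenance (A ->, fixed flux), biomass (A ->).
S = np.array([[1.0, -1.0, -1.0]])
c = np.array([0.0, 0.0, 1.0])

def solve_fba(uptake_bound, maintenance=1.0):
    """Maximize the biomass flux for one sampled uptake bound."""
    bounds = [(0.0, uptake_bound), (maintenance, maintenance), (0.0, None)]
    res = linprog(-c, A_eq=S, b_eq=[0.0], bounds=bounds, method="highs")
    if res.status != 0:
        raise RuntimeError(res.message)
    return -res.fun

rng = np.random.default_rng(0)
n_samples = 500  # kept small here; the protocol suggests on the order of 10,000
# Sampled bounds; clipped at the maintenance demand so every LP stays feasible.
sampled_bounds = np.clip(rng.normal(8.7, 1.2, size=n_samples), 1.0, None)
growth = np.array([solve_fba(b) for b in sampled_bounds])

growth_mean = growth.mean()
growth_sd = growth.std(ddof=1)
ci_low, ci_high = np.percentile(growth, [2.5, 97.5])
print(f"objective: mean={growth_mean:.2f} sd={growth_sd:.2f} "
      f"95% interval=[{ci_low:.2f}, {ci_high:.2f}]")
```

Each LP solve is independent of the others, so the loop parallelizes trivially when the model is genome-scale and the sample count is large.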

Table 1: Example MC Output for a Microbial Growth FBA Model

| Flux/Variable | Mean | Std. Dev. | 2.5% Percentile | 97.5% Percentile |

|---|---|---|---|---|

| Optimal Growth Rate (hr⁻¹) | 0.42 | 0.05 | 0.33 | 0.51 |

| Glucose Uptake (mmol/gDW/hr) | 8.7 | 1.2 | 6.5 | 11.1 |

| ATPase Flux (mmol/gDW/hr) | 15.3 | 2.1 | 11.5 | 19.4 |

| Succinate Secretion (mmol/gDW/hr) | 0.8 | 0.4 | 0.1 | 1.6 |

Protocol: Markov Chain Monte Carlo (MCMC) for Bayesian FBA

Objective: Infer posterior distributions of enzyme turnover numbers ((k_{cat})) by integrating FBA with metabolomic and fluxomic data.

Procedure:

- Define Likelihood Model: Assume experimentally measured fluxes ( v_{exp} ) are normally distributed around FBA-predicted fluxes ( v_{FBA}(\theta) ) with variance ( \sigma^2 ): ( P(v_{exp} | \theta) = \mathcal{N}(v_{FBA}(\theta), \sigma^2) ).

- Specify Priors: Set prior distributions for (k_{cat}) parameters (e.g., Log-Normal based on BRENDA database).

- Run MCMC: Use an algorithm (e.g., Metropolis-Hastings, Hamiltonian Monte Carlo) to draw samples from the posterior ( P(\theta | v_{exp}) ). The sampler proposes new parameter sets, evaluates the FBA solution, and accepts/rejects based on the likelihood and prior.

- Diagnose Convergence: Assess chain mixing and stationarity using the Gelman-Rubin statistic and trace plots.

- Interpret Posterior: Use the sampled posterior to identify well-constrained versus poorly-identified parameters and generate predictive intervals for unobserved fluxes.
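A compact random-walk Metropolis-Hastings sketch of this protocol follows. It is illustrative only: `v_fba` is a one-line stand-in for an FBA solve, the parameter is ( \theta = \log_{10} k_{cat} ) with a Normal prior (i.e., log-normal on ( k_{cat} )), and the measured fluxes, enzyme level, ( \sigma ), and proposal width are assumed values.

```python
import math
import random
import statistics

V_EXP = [2.9, 3.1, 3.0, 3.2, 2.8]  # hypothetical measured fluxes
SIGMA = 0.2                        # assumed measurement standard deviation
ENZYME = 0.01                      # assumed enzyme abundance
V_CAP = 10.0                       # assumed upper flux limit from other constraints

def v_fba(theta):
    """Stand-in for an FBA solve: flux limited by kcat * enzyme or by a cap."""
    return min(10 ** theta * ENZYME, V_CAP)

def log_posterior(theta, prior_mean=2.3, prior_sd=0.5):
    log_prior = -0.5 * ((theta - prior_mean) / prior_sd) ** 2
    pred = v_fba(theta)
    log_like = sum(-0.5 * ((v - pred) / SIGMA) ** 2 for v in V_EXP)
    return log_prior + log_like

def metropolis_hastings(start, n_steps=5000, step_sd=0.03, seed=0):
    rng = random.Random(seed)
    theta, logp = start, log_posterior(start)
    chain = []
    for _ in range(n_steps):
        proposal = theta + rng.gauss(0.0, step_sd)
        logp_new = log_posterior(proposal)
        # Symmetric proposal: accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, logp_new - logp)):
            theta, logp = proposal, logp_new
        chain.append(theta)
    return chain

def gelman_rubin(chains):
    """Potential scale reduction factor for equal-length chains."""
    n = len(chains[0])
    within = statistics.fmean([statistics.variance(ch) for ch in chains])
    between = n * statistics.variance([statistics.fmean(ch) for ch in chains])
    pooled = (n - 1) / n * within + between / n
    return math.sqrt(pooled / within)

burn_in = 1000
chains = [metropolis_hastings(start, seed=i)[burn_in:]
          for i, start in enumerate([2.0, 2.8])]
r_hat = gelman_rubin(chains)
draws = [theta for ch in chains for theta in ch]
post_mean = statistics.fmean(draws)
print(f"posterior mean log10(kcat) = {post_mean:.3f}, R-hat = {r_hat:.3f}")
```

Two chains started from dispersed values are the minimum needed for the Gelman-Rubin diagnostic; with a real FBA solve inside `log_posterior`, each iteration costs one LP, which is why gradient-based samplers or surrogate models are often preferred for larger parameter sets.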

Table 2: Bayesian Inference Results for Key Kinetic Parameters

| Enzyme (EC Number) | Prior Mean (log10) | Posterior Mean (log10) | Posterior Std. Dev. | 95% Credible Interval |

|---|---|---|---|---|

| Phosphofructokinase (2.7.1.11) | 2.30 (200 s⁻¹) | 2.15 | 0.12 | [1.92, 2.38] |

| Pyruvate Kinase (2.7.1.40) | 2.48 (300 s⁻¹) | 2.70 | 0.15 | [2.42, 3.00] |

| Isocitrate Dehydrogenase (1.1.1.42) | 1.90 (79 s⁻¹) | 2.25 | 0.20 | [1.88, 2.65] |

Visualizing Workflows and Relationships

Title: Monte Carlo Parameter Uncertainty Workflow

Title: Bayesian Inference Core Relationship

Title: MCMC Sampling Loop for Bayesian FBA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Data Resources

| Item | Function/Description | Example/Resource |

|---|---|---|

| FBA Solver | Core LP/QP optimization engine for constraint-based models. | COBRApy (Python), Matlab COBRA Toolbox |

| MC Sampling Library | Generate pseudo-random samples from probability distributions. | NumPy (Python), Statistics Toolbox (Matlab) |

| MCMC Engine | Perform advanced Bayesian posterior sampling. | PyMC3/Stan (Python), JAGS (R) |

| Kinetic Parameter Database | Source for prior distributions on enzyme kinetic constants. | BRENDA, SABIO-RK |

| Metabolomic/Fluxomic Data | Observational data for constructing likelihood functions. | Public repositories (MetaboLights, EMP) |

| High-Performance Computing (HPC) | Parallelize thousands of FBA solves for MC/MCMC. | Cloud (AWS, GCP) or local cluster with SLURM |

| Visualization Suite | Analyze and plot high-dimensional parameter and flux distributions. | ArviZ (Python), ggplot2 (R), Matplotlib |

Within the broader research on Flux Balance Analysis (FBA) model reliability and confidence estimation, sensitivity analysis stands as a critical methodology. FBA predicts metabolic flux distributions by optimizing an objective function, subject to stoichiometric and thermodynamic constraints. The reliability of these predictions hinges on the accuracy of two core model components: the stoichiometric matrix (S) and the flux bounds (vmin, vmax). This technical guide provides an in-depth examination of sensitivity analysis techniques used to probe the impact of stoichiometric coefficients and flux bound assignments, thereby quantifying confidence in model predictions and guiding iterative model refinement.

The Mathematical Foundation: Why Sensitivity Matters

The canonical FBA problem is formulated as:

Maximize: ( Z = c^T v )

Subject to: ( S \cdot v = b ), ( v_{min} \leq v \leq v_{max} )

The solution space is a convex polytope defined by the intersection of the null space of S and the half-spaces imposed by the flux bounds. Small perturbations in stoichiometric coefficients (elements of S) or in the boundary values can lead to significant changes in the optimal flux distribution, alternative optimal solutions, or even render the problem infeasible. Sensitivity analysis systematically evaluates this robustness.

Probing Stoichiometric Uncertainty

Stoichiometric coefficients are often derived from biochemical literature and may contain experimental error or be condition-specific.

Experimental Protocol: Monte Carlo Stoichiometric Sampling

- Define Probability Distributions: For each non-zero element ( S_{ij} ) in the stoichiometric matrix, assign a probability distribution (e.g., Gaussian with mean = nominal value, standard deviation = 5-10% of mean, truncated at physiological limits).

- Generate Perturbed Models: Perform N (e.g., 1000) iterations where a new matrix ( S_k ) is generated by sampling each coefficient from its defined distribution.

- Solve and Record: For each ( S_k ), solve the FBA problem. Record the objective value ( Z_k ) and key reaction fluxes ( v_{key} ).

- Analyze Variance: Compute the coefficient of variation (CV) for ( Z ) and ( v_{key} ) across all iterations. High CV indicates high sensitivity to stoichiometric uncertainty.
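A minimal sketch of this protocol, assuming a three-reaction toy network in which a single yield coefficient is uncertain. The 5% standard deviation, the clipping at ±20% (a simple stand-in for truncation), and the 300 iterations are illustrative choices, and only one coefficient is perturbed to keep the example readable.

```python
import numpy as np
from scipy.optimize import linprog

def solve_fba(yield_coeff):
    """FBA on a toy network whose conversion step A -> yield_coeff * B is uncertain."""
    # Rows: metabolites A, B. Columns: uptake (-> A), conversion, biomass (B ->).
    S = np.array([[1.0, -1.0, 0.0],
                  [0.0, yield_coeff, -1.0]])
    bounds = [(0.0, 10.0), (0.0, None), (0.0, None)]
    # Maximize the biomass flux (linprog minimizes, hence the -1 coefficient).
    res = linprog([0.0, 0.0, -1.0], A_eq=S, b_eq=[0.0, 0.0],
                  bounds=bounds, method="highs")
    return -res.fun

rng = np.random.default_rng(42)
nominal, rel_sd, n_iter = 1.0, 0.05, 300
# Gaussian perturbation of the coefficient, clipped at +/-20% of nominal.
coeffs = np.clip(rng.normal(nominal, rel_sd * nominal, n_iter), 0.8, 1.2)
z = np.array([solve_fba(s) for s in coeffs])

cv_percent = 100.0 * z.std(ddof=1) / z.mean()
print(f"objective: mean={z.mean():.2f}, CV={cv_percent:.1f}%")
```

In this network the objective is directly proportional to the perturbed coefficient, so its CV mirrors the input uncertainty; in a real model the interesting cases are reactions whose CV is much larger than that of the coefficients feeding them.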

Table 1: Example Output from Stoichiometric Sensitivity Analysis on a Core Metabolic Model

| Reaction Identifier | Nominal Flux (mmol/gDW/h) | Mean Flux (± Std Dev) | Coefficient of Variation (%) | Sensitive (CV > 15%) |

|---|---|---|---|---|

| Biomass_Reaction | 0.85 | 0.82 (± 0.09) | 11.0 | No |

| ATPM | 2.50 | 2.51 (± 0.15) | 6.0 | No |

| PFK | 3.20 | 3.10 (± 0.75) | 24.2 | Yes |

| PGI | 3.20 | 2.95 (± 0.90) | 30.5 | Yes |

| GND | 1.80 | 1.82 (± 0.10) | 5.5 | No |

Analyzing Flux Bound Impact

Flux bounds represent thermodynamic irreversibility, enzyme capacity, and substrate uptake rates. They are often estimated or measured with uncertainty.

Experimental Protocol: Flux Variability Analysis (FVA) with Bound Perturbation

FVA determines the minimum and maximum possible flux for each reaction within the solution space while maintaining optimal (or near-optimal) objective function value.

- Baseline FVA: For each reaction ( v_i ), solve:

- Maximize ( v_i ), subject to ( S \cdot v = b, v_{min} \leq v \leq v_{max}, c^T v \geq \alpha Z_{opt} ) where ( \alpha ) is typically 0.95-1.0.

- Minimize ( v_i ) under the same constraints. The result is the range [( v_{i,min}, v_{i,max} )].

- Perturb Key Bounds: Identify transport or critical reaction bounds (e.g., glucose uptake, ( v_{GLU_max} )). Systematically vary this bound over a physiological range (e.g., 0-20 mmol/gDW/h).

- Measure Impact: At each perturbed bound value, re-run FVA for reactions of interest. Plot the flux range versus the perturbed bound to identify linear, threshold, or saturation responses.
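The bound-perturbation loop can be sketched as follows. The toy network (glucose and oxygen uptake, a biomass reaction consuming one glucose and two oxygen, and a glucose overflow drain), the fixed oxygen bound of 15, and ( \alpha = 0.99 ) are assumptions for illustration; the FVA range is computed only for the glucose uptake reaction.

```python
import numpy as np
from scipy.optimize import linprog

# Rows: metabolites G (glucose), O (oxygen).
# Columns: glucose uptake, oxygen uptake, biomass (G + 2 O ->), overflow (G ->).
S = np.array([[1.0, 0.0, -1.0, -1.0],
              [0.0, 1.0, -2.0, 0.0]])
GLC, BIOMASS = 0, 2

def fva_at_bound(glc_ub, o2_ub=15.0, alpha=0.99):
    """Return (Z_opt, min uptake, max uptake) for one glucose uptake bound."""
    bounds = [(0.0, glc_ub), (0.0, o2_ub), (0.0, None), (0.0, None)]
    b_eq = np.zeros(2)
    c = np.zeros(4)
    c[BIOMASS] = 1.0
    fba = linprog(-c, A_eq=S, b_eq=b_eq, bounds=bounds, method="highs")
    z_opt = -fba.fun
    # Near-optimality constraint c^T v >= alpha * Z_opt as an inequality row.
    a_ub = -c.reshape(1, -1)
    b_ub = [-alpha * z_opt]
    e = np.zeros(4)
    e[GLC] = 1.0
    v_min = linprog(e, A_ub=a_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                    bounds=bounds, method="highs").fun
    v_max = -linprog(-e, A_ub=a_ub, b_ub=b_ub, A_eq=S, b_eq=b_eq,
                     bounds=bounds, method="highs").fun
    return z_opt, v_min, v_max

sweep = {ub: fva_at_bound(ub) for ub in [0.0, 5.0, 10.0, 15.0, 20.0]}
for ub, (z_opt, v_min, v_max) in sweep.items():
    print(f"glucose ub={ub:5.1f}  growth={z_opt:5.2f}  "
          f"uptake range=[{v_min:.2f}, {v_max:.2f}]")
```

Growth rises linearly with the glucose bound until oxygen becomes limiting at 7.5, after which it saturates and the feasible uptake range widens because excess glucose can leave through the overflow reaction.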

Table 2: Sensitivity of Growth Rate to Perturbations in Key Flux Bounds

| Perturbed Bound | Nominal Value (mmol/gDW/h) | Perturbation Range Tested | % Change in Biomass Flux per 10% Change in Bound | Classification of Sensitivity |

|---|---|---|---|---|

| Glucose Uptake (vmax) | 10.0 | [0.0, 15.0] | +8.5% (0-10 mmol), +0.5% (>10 mmol) | High then Saturated |

| Oxygen Uptake (vmax) | 15.0 | [0.0, 20.0] | +4.2% | Moderate |

| ATP Maintenance (vmin) | 2.5 | [1.0, 4.0] | -3.1% | Low/Inverse |

| Lactate Export (vmax) | 1000.0 (unconstrained) | [0.0, 20.0] | 0.0% | Insensitive |

Integrated Workflow for Confidence Estimation

A robust sensitivity analysis protocol integrates both dimensions to identify the most influential parameters.

Workflow for Model Confidence Estimation

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Sensitivity Analysis |

|---|---|

| COBRA Toolbox (MATLAB) | Primary software suite for running FBA, FVA, and implementing custom Monte Carlo sampling scripts for sensitivity analysis. |

| cobrapy (Python) | Python-based alternative to COBRA, enabling seamless integration with machine learning and data science libraries for analysis. |

| MC3 (Monte Carlo) | A specialized Python library for robust Markov Chain Monte Carlo sampling, useful for advanced Bayesian sensitivity analysis. |

| Gurobi/CPLEX Optimizers | Commercial solvers integrated with COBRA/cobrapy for fast, reliable solving of large-scale linear programming (FBA) problems. |

| Jupyter Notebooks | Interactive environment for documenting the entire sensitivity analysis workflow, ensuring reproducibility and collaboration. |

| SBML Model File | Standardized (Systems Biology Markup Language) file containing the model's stoichiometry, bounds, and annotations. |

| Parameter Sweep Database | A structured database (e.g., SQLite, HDF5) to store thousands of simulation outputs from Monte Carlo and bound perturbation runs. |

Visualizing Parameter Influence Networks

Sensitivity results can be mapped onto metabolic networks to identify fragile hubs.

Sensitive Reactions in Glycolysis

Systematic sensitivity analysis of stoichiometry and bounds transforms FBA from a static predictive tool into a framework for quantitative confidence estimation. By identifying which parameters most significantly impact predictions—such as the sensitive glycolytic reactions in Table 1 or the high-impact glucose bound in Table 2—researchers can strategically allocate experimental resources for parameter refinement. This process is fundamental to building reliable, actionable metabolic models for applications in systems biology and rational drug development, where understanding the limits of prediction is as important as the prediction itself.

Within the broader research on Flux Balance Analysis (FBA) model reliability and confidence estimation, the identification of high-confidence metabolic drug targets presents a critical challenge. Traditional target discovery often yields candidates with high in vitro efficacy but fails in clinical stages due to metabolic network flexibility, redundancy, and poor in vivo context. This whitepaper details a computational-experimental framework that integrates constrained genome-scale metabolic models (GSMMs) with multi-omics validation to assign confidence scores to potential metabolic targets, thereby derisking early-stage drug development.

Core Methodology: A Confidence Scoring Framework

The proposed framework quantifies target confidence through a multi-tiered scoring system, integrating in silico predictions with empirical evidence layers.

Table 1: Confidence Scoring Metrics for Metabolic Targets

| Metric Category | Specific Metric | Weight | Scoring Range | Description |

|---|---|---|---|---|

| Computational Essentiality | Synthetic Lethality (SL) Score | 0.25 | 0-10 | Derived from dual gene knockout simulations in context-specific GSMMs. |

| Computational Essentiality | Flux Variability Range | 0.15 | 0-10 | Measures the potential of the network to bypass the reaction inhibition (low range = high confidence). |

| Multi-omics Correlation | Transcript-Protein-Reaction (TPR) Concordance | 0.20 | 0-10 | Degree of agreement between gene expression, protein abundance, and predicted flux. |

| Multi-omics Correlation | Metabolomic Disruption Index | 0.15 | 0-10 | Predicted change in downstream metabolite pools from metabolomic data integration. |

| Experimental Validation | CRISPR-Cas9 Essentiality (DepMap) | 0.15 | 0-10 | Correlation with large-scale functional genomics knockout data in relevant cell lines. |

| Experimental Validation | Pharmacological Validation | 0.10 | 0-10 | Evidence from known inhibitors or chemical probes (e.g., from PubChem BioAssays). |

A final confidence score (0-100%) is calculated as the weighted sum of the six metric scores, rescaled from the 0-10 range to a percentage. Targets scoring above 70% are considered "high-confidence."
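A short worked example of the weighted sum, using the weights from Table 1. The metric scores for the candidate target are hypothetical, and the factor of 10 that maps the 0-10 weighted sum onto 0-100% is an assumption inferred from the stated ranges.

```python
# Weights from Table 1 (they sum to 1.0).
WEIGHTS = {
    "synthetic_lethality": 0.25,
    "flux_variability_range": 0.15,
    "tpr_concordance": 0.20,
    "metabolomic_disruption": 0.15,
    "crispr_essentiality": 0.15,
    "pharmacological_validation": 0.10,
}

def confidence_percent(scores):
    """Weighted sum of 0-10 metric scores, rescaled to 0-100%."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing metric scores: {sorted(missing)}")
    return 10.0 * sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)

def is_high_confidence(percent, threshold=70.0):
    return percent > threshold

# Hypothetical scores for one candidate target.
candidate = {
    "synthetic_lethality": 9,
    "flux_variability_range": 8,
    "tpr_concordance": 7,
    "metabolomic_disruption": 6,
    "crispr_essentiality": 8,
    "pharmacological_validation": 5,
}
percent = confidence_percent(candidate)
print(f"confidence = {percent:.1f}% -> high-confidence: {is_high_confidence(percent)}")
```

For these scores the weighted sum is 7.45 on the 0-10 scale, i.e., 74.5%, which clears the 70% threshold.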

Experimental Protocols for Validation

Protocol 1: Generating Context-Specific GSMMs for Target Prediction

- Input Data: RNA-Seq or proteomics data from diseased vs. healthy human tissues or relevant cell lines.

- Model Reconstruction: Use the Human1 or Recon3D model as a template. Apply the INIT or mCADRE algorithm to generate a tissue/cell-line-specific model.

- Constraint Integration: Integrate transcriptomic data via the E-Flux2 method or proteomic data via the GECKO toolbox to set reaction bounds.

- Simulation & Target Identification: Perform parsimonious FBA (pFBA) and Flux Variability Analysis (FVA). Identify candidate targets as reactions whose inhibition:

- Significantly reduces biomass/virulence factor production (for pathogens).

- Induces synthetic lethality with a known disease mutation.

- Has minimal flux variability (network rigidity).

Protocol 2: In Vitro Flux Validation via Stable Isotope Tracing

- Cell Culture: Culture target cell line in medium with ¹³C-labeled glucose (e.g., [U-¹³C]glucose).

- Inhibition: Treat experimental arm with a specific inhibitor of the target enzyme; use DMSO vehicle for control.

- Metabolite Extraction: At harvest (e.g., 24h), use cold methanol:water (80:20) extraction.

- LC-MS Analysis: Analyze polar metabolites via liquid chromatography coupled to high-resolution mass spectrometry.

- Data Processing: Use software (e.g., Maven, XCMS) to quantify isotopologue distributions of key pathway metabolites (e.g., TCA cycle intermediates).

- Flux Inference: Compare labeling patterns between treated and control cells using software such as INCA (Isotopomer Network Compartmental Analysis) to confirm predicted flux alterations at the target node.

Visualizing the Workflow and Pathway Impact

Diagram 1: Computational target identification and scoring workflow.

Diagram 2: Example target inhibition in glycolysis and TCA cycle.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Target Identification & Validation

| Item | Function & Application | Example Product/Catalog |

|---|---|---|

| Human Genome-Scale Metabolic Model | Template for building context-specific models for in silico simulations. | Recon3D (Virtual Metabolic Human database), Human1 (Metabolic Atlas). |

| Constraint-Based Modeling Software | Platform for FBA, FVA, and simulation of gene/reaction knockouts. | COBRA Toolbox (MATLAB), cobrapy (Python). |

| Stable Isotope-Labeled Substrate | Enables experimental flux measurement via isotopic tracing. | [U-(^{13})C]-Glucose (CLM-1396, Cambridge Isotope Laboratories). |

| Target-Specific Chemical Probe | For pharmacological validation of target essentiality in vitro. | Inhibitors from Selleckchem (e.g., specific kinase/DHFR inhibitors). |

| CRISPR/Cas9 Knockout Pool Library | For genome-wide functional validation of gene essentiality. | Brunello or Calabrese whole-genome knockout libraries (Addgene). |

| Metabolomics Analysis Software | Processes LC-MS data for isotopologue distribution and quantification. | Maven, XCMS Online, MetaboAnalyst. |

| Multi-Omics Integration Tool | Correlates transcriptomic/proteomic data with model constraints. | Omics Integrator, GECKO Toolbox. |

Integrating confidence estimation directly into the FBA-driven target discovery pipeline moves metabolic targeting from a high-attrition gamble toward a data-driven, quantitative discipline. By requiring candidates to demonstrate robustness across computational predictions, multi-omics correlations, and preliminary experimental validation, this framework is designed to raise the probability of clinical success for novel metabolic drugs. Future work in this thesis will focus on refining confidence metrics using machine learning on historical success/failure data and incorporating single-cell omics for tumor subpopulation targeting.

The reliability of Flux Balance Analysis (FBA) predictions is central to systems biology, particularly in pathogen research. FBA operates on genome-scale metabolic reconstructions (GEMs), which are used to predict gene essentiality and thereby inform potential drug targets. However, predictions often vary between models of the same organism, leading to uncertainty. This case study situates itself within the broader thesis that quantifying confidence in FBA predictions is not merely supplementary but essential for translating in silico findings into viable drug development pipelines. We explore how multi-metric confidence scoring can be applied to essential gene predictions in pathogenic bacteria, enhancing model utility for researchers and pharmaceutical professionals.

Core Confidence Metrics: Definitions and Quantitative Benchmarks

Essential gene prediction confidence is derived from a confluence of metrics. The table below summarizes key quantitative indicators and their interpretative benchmarks.

Table 1: Core Confidence Metrics for Essential Gene Predictions

| Metric | Description | High-Confidence Range | Rationale |
|---|---|---|---|
| Flcon (Flux Consistency) | Proportion of sampled growth conditions where gene deletion yields zero growth. | > 0.95 | Indicates robust essentiality across diverse metabolic environments. |
| PEM (Predictive Enrichment Metric) | Statistical enrichment of predictions against a high-quality experimental gold standard dataset. | p-value < 0.01, Odds Ratio > 5 | Measures agreement with empirical data. |
| GapFill Dependency Score | Frequency of a reaction, associated with the gene, being added via model gap-filling. | < 0.1 | Lower scores suggest the gene's role is inherent to the reconstruction, not an artifact of curation. |
| Subsystem Ubiquity | Number of distinct metabolic subsystems the gene's associated reactions participate in. | Low (1-2) | Genes specific to a single, vital pathway (e.g., cell wall synthesis) are often more reliably predicted as essential. |
| Model Agreement Score | Consensus across multiple independent GEMs for the same organism. | > 0.8 | Mitigates bias from any single reconstruction methodology. |
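To make the PEM row concrete, the sketch below computes an odds ratio and a one-sided Fisher's exact p-value from a 2x2 table of predicted versus experimental essentiality, using only the standard library. Several things here are assumptions: the function name, the choice of a one-sided (enrichment) test, since the table does not say which alternative is intended, and the example counts, which are invented. In practice a statistics library routine would normally be used instead of this hand-rolled version.

```python
from math import comb, inf


def fisher_exact_enrichment(a, b, c, d):
    """One-sided Fisher's exact test for enrichment on a 2x2 table.

                              exp. essential   exp. non-essential
    predicted essential             a                  b
    predicted non-essential         c                  d

    Returns (odds_ratio, p_value); p_value is the probability of seeing
    at least `a` true positives under the hypergeometric null.
    """
    row1, col1, n = a + b, a + c, a + b + c + d
    k_max = min(row1, col1)
    numerator = sum(comb(row1, k) * comb(n - row1, col1 - k)
                    for k in range(a, k_max + 1))
    p_value = numerator / comb(n, col1)
    odds_ratio = (a * d) / (b * c) if b * c else inf
    return odds_ratio, p_value


# Hypothetical counts: 50 predicted essential genes, 60 experimentally essential
odds_ratio, p_value = fisher_exact_enrichment(a=40, b=10, c=20, d=430)
meets_pem = p_value < 0.01 and odds_ratio > 5  # thresholds from the table above
print(f"odds ratio = {odds_ratio:.1f}, p = {p_value:.2e}, high confidence: {meets_pem}")
```

Note that this statistic describes the prediction set as a whole, not an individual gene, so every gene from the same model and condition shares the same PEM value.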

Experimental Protocol: A Workflow for Confidence-Driven Prediction

This protocol outlines a standardized method for applying confidence metrics.

Protocol Title: Multi-Metric Confidence Scoring for In Silico Gene Essentiality Predictions.

Objective: To generate a high-confidence list of essential genes from a pathogen GEM.

Inputs: A genome-scale metabolic model (SBML format), a media condition definition file, and a curated experimental essentiality dataset (e.g., from transposon sequencing).

Step-by-Step Procedure:

1. Model Curation & Simulation:

   - Load the GEM. Simulate wild-type growth on the target media to establish a baseline growth rate.

   - Perform in silico single-gene knockout simulations for all metabolic genes using parsimonious FBA or a similar constraint-based method.

   - Classify a gene as "predicted essential" if the knockout model's growth rate is <5% of the wild-type rate.

2. Flux Consistency (Flcon) Calculation:

   - Define a set of 100+ biologically relevant media conditions (varying carbon, nitrogen, oxygen sources).

   - Re-run the knockout simulation for each predicted essential gene across all conditions.

   - Calculate Flcon = (Number of conditions with zero growth) / (Total conditions tested).

3. Benchmarking Against Experimental Data:

   - Compare predictions to a gold-standard experimental dataset (e.g., large-scale mutagenesis).

   - Calculate the PEM using a Fisher's Exact Test on a 2x2 contingency table (Predicted vs. Experimental Essentiality).

4. GapFill and Functional Analysis:

   - Parse the model reconstruction history to flag reactions added via gap-filling. For each gene, calculate the GapFill Dependency Score.

   - Map genes to metabolic subsystems using model annotations.

5. Consensus Scoring (if multiple models exist):

   - Repeat steps 1-3 for all available GEMs of the pathogen.

   - For each gene, calculate the Model Agreement Score as the proportion of models predicting it as essential.

6. Integrated Confidence Assignment:

   - Assign each predicted essential gene a composite confidence tier:

     - Tier 1 (High): Flcon > 0.95, PEM p-value < 0.01, GapFill Score < 0.1, and Model Agreement > 0.8 (the agreement criterion is treated as met when only a single model exists).

     - Tier 2 (Medium): Meets 2-3 of the Tier 1 criteria.

     - Tier 3 (Low): Meets 0-1 of the Tier 1 criteria.

Output: A ranked list of essential genes with associated confidence metrics and tier classification.
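The scoring arithmetic in this protocol is simple enough to sketch directly. The code below covers the essentiality call, Flcon, the Model Agreement Score, and the tier rule, taking growth rates and scores as plain numbers. Function names, the zero-growth tolerance, and all example values are assumptions made for illustration; the knockout simulations themselves would come from a constraint-based modeling package and are not shown.

```python
def is_predicted_essential(ko_growth, wt_growth, threshold=0.05):
    """Essential if knockout growth is below 5% of wild-type growth."""
    return ko_growth < threshold * wt_growth


def flux_consistency(ko_growth_by_condition, tol=1e-9):
    """Flcon = conditions with zero growth / total conditions tested."""
    rates = list(ko_growth_by_condition)
    if not rates:
        raise ValueError("at least one condition is required")
    return sum(1 for g in rates if g <= tol) / len(rates)


def model_agreement(essential_calls):
    """Proportion of models that call the gene essential."""
    calls = list(essential_calls)
    return sum(1 for c in calls if c) / len(calls)


def confidence_tier(flcon, pem_p_value, gapfill_score, agreement=None):
    """Tier 1 if all four criteria are met, Tier 2 for 2-3, Tier 3 for 0-1.

    agreement=None means only one model exists; that criterion counts as met.
    The PEM p-value is a model-level statistic shared by all genes.
    """
    criteria = [
        flcon > 0.95,
        pem_p_value < 0.01,
        gapfill_score < 0.1,
        agreement is None or agreement > 0.8,
    ]
    met = sum(criteria)
    if met == 4:
        return 1
    return 2 if met >= 2 else 3


# Hypothetical gene: no growth in 98 of 100 conditions, essential in 4 of 4 models
flcon = flux_consistency([0.0] * 98 + [0.2, 0.1])
agreement = model_agreement([True, True, True, True])
print(confidence_tier(flcon, pem_p_value=0.001, gapfill_score=0.0, agreement=agreement))
```

Returning the tier as an integer keeps the final ranking step trivial: sort by tier, then by Flcon within each tier.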

Visualizing the Workflow and Metabolic Impact

Title: Workflow for Confidence-Based Essential Gene Prediction

Title: Targeting a High-Confidence Essential Gene in a Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools and Reagents for Validation of Predicted Essential Genes

| Item | Function/Description | Application in Validation |
|---|---|---|
| Conditional Knockdown System (e.g., CRISPRi) | Enables titratable repression of target gene expression in vivo. | Validates essentiality without complete knockout, allowing study of fitness defects. |
| Transposon Mutagenesis Library (e.g., Tn-seq) | Genome-wide library of random insertions for high-throughput fitness profiling. | Provides experimental gold-standard data for benchmarking in silico predictions (PEM calculation). |
| Defined Minimal Media Kits | Chemically defined media with specific nutrient compositions. | Used in vitro to test condition-specific essentiality predictions, informing Flcon simulations. |
| Metabolite Standards (UPLC/MS Grade) | Quantitative standards for intracellular metabolites. | Measure flux changes or metabolite pool depletion following gene knockdown, confirming metabolic role. |
| Pathogen-Specific Metabolite Extraction Buffer | Optimized for quenching metabolism and extracting polar/non-polar metabolites from the specific pathogen. | Ensures accurate metabolomic profiling during validation experiments. |
| Whole-Cell Lysis Reagent (for Western/ELISA) | Efficiently extracts proteins while maintaining epitope integrity. | Quantifies protein expression changes post-knockdown, linking genotype to phenotype. |
| Microplate-Based Growth Assay (Phenotype Microarray) | High-throughput measurement of growth under hundreds of conditions. | Empirically tests the condition-dependent essentiality predicted by the Flcon metric. |

Troubleshooting FBA Models: Common Pitfalls, Diagnostic Strategies, and Model Refinement

Diagnosing Ill-Posed Problems and Thermodynamic Infeasibilities

Within the broader thesis on Flux Balance Analysis (FBA) model reliability and confidence estimation, identifying and resolving ill-posed problems and thermodynamic infeasibilities is paramount. An FBA model is ill-posed when it lacks a unique or stable solution due to inadequate constraints or inherent redundancies. Thermodynamic infeasibility refers to solutions that violate the second law of thermodynamics, typically manifested as infeasible energy loops (Type III loops) that allow net energy generation without an input. These issues directly undermine the predictive reliability of metabolic models in research and industrial applications, such as drug target identification and metabolic engineering.

Core Concepts and Quantitative Data

| Source | Description | Typical Consequence |
|---|---|---|
| Under-constrained Network | Missing thermodynamic (ΔG) or flux capacity constraints. | Infinite solution space; non-unique flux distributions. |
| Redundant Constraints | Linearly dependent constraints in the stoichiometric matrix (S). | Numerical instability; solver failures. |
| Unbounded Objective | Objective function can increase indefinitely. | Unrealistically high predicted yields. |
| Blocked Reactions | Reactions that cannot carry flux under any condition. | Model predictions omit viable metabolic pathways. |

Table 2: Quantitative Indicators of Thermodynamic Infeasibility

| Indicator | Calculation | Feasible Threshold |
|---|---|---|
| Energy-Generating Cycle (EGC) Detection | ∑_i ΔG_i · v_i < 0 over the reactions i of a closed loop. | Must be ≥ 0 for all loops. |
| Thermodynamic Consistency (TFA) | Feasibility of transformed primal problem with ΔG bounds. | Primal solution exists. |
| Max-Min Driving Force (MDF) | Maximize the minimum \|ΔG\| across all reactions. | Higher MDF suggests more robust feasibility. |

Diagnostic Methodologies & Experimental Protocols

Protocol: Detecting Energy-Generating Cycles (EGCs)

Objective: Identify thermodynamically infeasible cycles in a flux solution.

Materials: Stoichiometric matrix (S), reaction free energy estimates (ΔG'°), measured fluxes (v).

Procedure:

- Flux Variability Analysis (FVA): For a given growth rate, compute the minimum and maximum possible flux for each reaction.

- Cycle Identification: Use network topology algorithms (e.g., null space analysis of the stoichiometric matrix under steady-state) to identify closed loops capable of carrying flux.

- Thermodynamic Assessment: For each identified loop, calculate the net change in Gibbs free energy, ∑_i (ΔG_i'° + RT ln Q_i) · v_i, where Q_i is the reaction quotient of reaction i computed from metabolite concentrations. A negative sum indicates an EGC.

- Validation: Apply additional constraints (e.g., loopless constraints) and re-solve FBA. Elimination of the EGC confirms the diagnosis.
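The cycle-identification and loopless-constraint steps rest on two properties that can be checked numerically for any candidate loop: the flux vector balances every metabolite using internal reactions only, and no assignment of metabolite potentials lets every reaction in it run downhill. The sketch below illustrates both on a hypothetical three-reaction cycle. The function names, the toy matrix, and the potentials are assumptions for illustration, and ΔG is derived here from a single set of metabolite potentials rather than from independent ΔG'° estimates.

```python
def is_closed_loop(S, v, tol=1e-9):
    """True if v is nonzero and S v = 0 (S holds internal reactions only)."""
    balanced = all(abs(sum(row[i] * v[i] for i in range(len(v)))) <= tol
                   for row in S)
    return balanced and any(abs(x) > tol for x in v)


def reaction_delta_g(S, mu):
    """ΔG_i = sum_j S[j][i] * mu[j] for each reaction (column) i."""
    return [sum(S[j][i] * mu[j] for j in range(len(S)))
            for i in range(len(S[0]))]


def runs_downhill(delta_g, v, tol=1e-9):
    """True if every flux-carrying reaction satisfies ΔG_i * v_i < 0."""
    return all(g * x < 0 for g, x in zip(delta_g, v) if abs(x) > tol)


# Toy internal cycle: R1: A -> B, R2: B -> C, R3: C -> A (rows = A, B, C)
S = [[-1, 0, 1],
     [1, -1, 0],
     [0, 1, -1]]
v = [1.0, 1.0, 1.0]          # unit flux around the cycle
mu = [-10.0, -20.0, -35.0]   # hypothetical metabolite potentials, kJ/mol

delta_g = reaction_delta_g(S, mu)
print("closed loop:", is_closed_loop(S, v))
print("sum of ΔG_i * v_i:", sum(g * x for g, x in zip(delta_g, v)))
print("all reactions downhill:", runs_downhill(delta_g, v))
```

Because the ΔG values come from one set of potentials, the terms around a closed loop cancel, so at least one reaction must run uphill. This is the condition that loopless constraints enforce when they exclude such flux vectors.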

Protocol: Thermodynamic Flux Analysis (TFA) for Infeasibility Diagnosis

Objective: Reformulate FBA to explicitly incorporate thermodynamic constraints.

Materials: Model in SBML format, estimated ΔG'° values, metabolite concentration ranges.

Procedure:

- Transform Variables: Replace reaction flux (v_j) with two non-negative variables for forward and reverse directions.

- Apply Wegscheider Conditions: Introduce thermodynamic potential variables (μ) for metabolites. Constrain the difference in potentials between products and reactants to be equal to the reaction's ΔG.

- Set Bounds: Apply known ΔG'° and concentration ranges to constrain μ and ΔG.

- Solve: Perform FBA on the transformed problem. Infeasibility indicates a conflict between the flux solution and thermodynamic laws.
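The Set Bounds step can be illustrated without a solver: given ΔG'° and concentration ranges, the attainable range of ΔG' = ΔG'° + RT ∑ s_j ln c_j already tells us which flux directions are thermodynamically possible for a reaction. The sketch below computes that range for one reaction. It is a simplified preprocessing example, not a full TFA formulation; the function names, the temperature of 298.15 K, the concentration bounds, and the ΔG'° values are all assumptions for illustration.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol*K)


def delta_g_range(dg0, stoich, conc_bounds, temperature=298.15):
    """Minimum and maximum ΔG' (kJ/mol) over the allowed concentrations.

    stoich:      {metabolite: coefficient}, negative for substrates
    conc_bounds: {metabolite: (low, high)} in mol/L
    """
    rt = R_KJ * temperature
    dg_min = dg_max = dg0
    for met, coeff in stoich.items():
        low, high = conc_bounds[met]
        terms = (coeff * math.log(low), coeff * math.log(high))
        dg_min += rt * min(terms)
        dg_max += rt * max(terms)
    return dg_min, dg_max


def allowed_directions(dg_min, dg_max):
    """Forward flux needs ΔG' < 0 somewhere in range; reverse needs ΔG' > 0."""
    return dg_min < 0, dg_max > 0


# Hypothetical reaction A -> B with concentrations between 1 uM and 10 mM
stoich = {"A": -1, "B": 1}
bounds = {"A": (1e-6, 1e-2), "B": (1e-6, 1e-2)}

for dg0 in (-40.0, 5.0):
    lo, hi = delta_g_range(dg0, stoich, bounds)
    fwd, rev = allowed_directions(lo, hi)
    print(f"dG'0 = {dg0:+.1f}: dG' in [{lo:.1f}, {hi:.1f}] kJ/mol, "
          f"forward={fwd}, reverse={rev}")
```

With these numbers the first reaction is forward-only, while the second remains reversible because its ΔG' range spans zero. A flux solution that runs the first reaction backwards would be flagged as infeasible once these bounds are imposed.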

Protocol: Resolving Ill-Posedness via Model Regularization

Objective: Obtain a unique, biologically relevant solution from an under-constrained model.

Materials: Core metabolic model, transcriptomic or proteomic data (optional).

Procedure:

- Parsimonious FBA (pFBA): Solve a two-step optimization: first maximize biomass (or objective), then minimize the total sum of absolute fluxes subject to the optimal objective.

- Flux Sampling: Use Markov Chain Monte Carlo (MCMC) methods to uniformly sample the feasible solution space defined by constraints.

- Integrate Omics Data: Apply additional linear constraints derived from data (e.g., enzyme capacity limits from proteomics) to reduce the solution space.

- Sensitivity Analysis: Perturb model constraints and objective function to assess solution robustness.
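The two-step pFBA optimization in the first step of this protocol can be written out as follows. The notation is an assumption for this sketch: S is the stoichiometric matrix, v the flux vector, c the objective coefficient vector, and v^lb and v^ub the flux bounds.

```latex
\begin{align*}
\text{Step 1:}\quad & Z^{*} = \max_{v}\; c^{\top} v
  && \text{s.t. } S v = 0,\quad v^{\mathrm{lb}} \le v \le v^{\mathrm{ub}} \\
\text{Step 2:}\quad & \min_{v}\; \sum_{i} \lvert v_i \rvert
  && \text{s.t. } S v = 0,\quad c^{\top} v = Z^{*},\quad v^{\mathrm{lb}} \le v \le v^{\mathrm{ub}}
\end{align*}
```

Step 2 stays a linear program if each flux is split as v_i = v_i^+ - v_i^- with v_i^+, v_i^- ≥ 0 and the objective becomes the sum of v_i^+ + v_i^-. The minimization narrows the set of optimal solutions, but it does not guarantee a unique flux distribution, which is one reason the protocol pairs it with sampling and sensitivity analysis.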

Visualization of Core Concepts

Title: Sources of Ill-Posedness and Thermodynamic Infeasibility